When search becomes the entry point for what users know, black hat GEO is not just a technical problem but a latent crisis of product trust. This article examines the vulnerabilities of AI search systems and explores how product teams can find a way out of the chaos.

I. Start with the experiment: what signals does it send?

In October 2025, an experiment set the industry abuzz. The experimenters published a roundup article containing exaggerated claims, and within a few days a leading domestic AI was repeating it when asked to recommend AI media tools. Once the experiment had worked, they deleted the article, saying in effect that they did not want to leave a mess everywhere.

Here is their recap of the whole process:

https://v.douyin.com/mftn36tvyrm/

This looks simple, but it points to a big problem of the AI era: today's AI, especially the large domestic models that rely on online search, is too easy to manipulate. Without touching any complicated algorithm, one ordinary article can make it say false things, and at extremely low cost. This is what we now call "black hat" GEO.

More notably, the experiment sends three signals:

1. AI's "judgment" is weaker than we thought: it simply scrapes content from the internet and cannot tell self-promotion from objective evaluation.

2. The threshold for manipulating AI is too low: no code is needed, only the ability to write an article, so small and medium-sized companies and even individuals can try it.

3. The industry has not yet worked out its bottom line: the experimenters' reason for deleting the article is a microcosm of the industry's dilemma. GEO is taking off, yet people fear wrecking the AI ecosystem, so what may and may not be done?

II. GEO: not a new thing, but a new kind of trouble

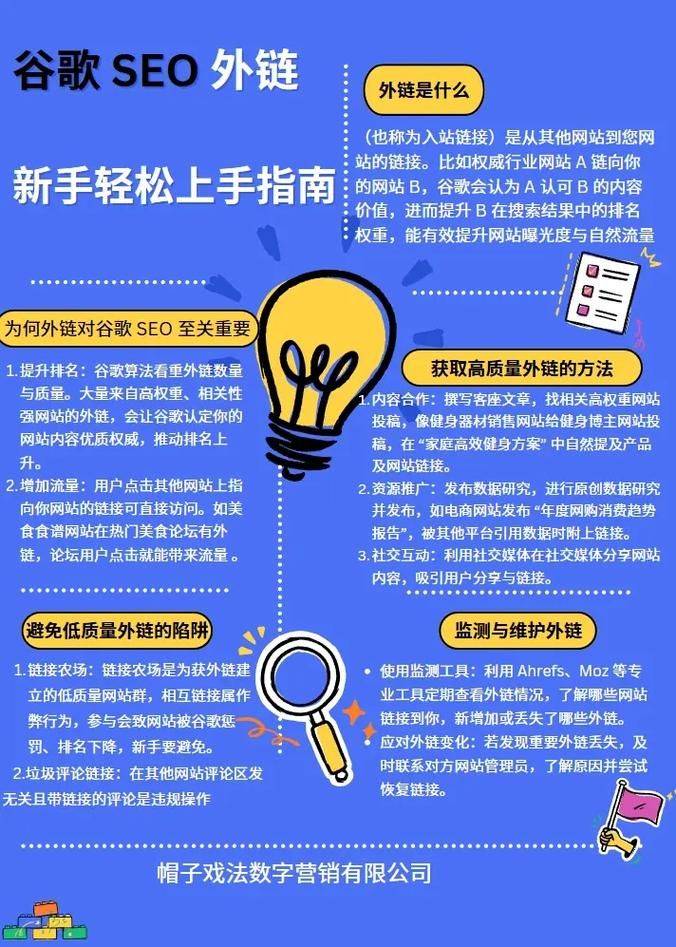

First, let's be clear about the concept. GEO (generative engine optimization) is essentially about getting the AI to recommend you first. It follows the same logic as earlier SEO, which got search engines to rank your web page first, except that the target is the AI's answer rather than the search results page.

It comes in two kinds, good "white hat" and bad "black hat", and the difference is simple:

The problem now is that black hat GEO is spreading, and it is more dangerous than the old black hat SEO:

With SEO, you had to click through to a web page before you could be fooled. With GEO, the AI feeds you the lie directly. For example, if you ask an AI which refrigerator is good, a poisoned AI may recommend a product that is not good at all. It is even more frightening for medical questions: if the AI recommends the wrong treatment, the consequences could be disastrous.

III. The soft spots of domestic AI: why is it so easy to poison?

Not every AI is this fragile. The problem with domestic large models lies mainly in three weaknesses; put bluntly, they lack the capability to do the work themselves:

Reliance on third-party search, with no judgment of its own

Many domestic AIs have no search capability of their own and rely on Baidu to fetch content. It is like wanting to know whether a restaurant is good and, instead of trying it yourself, reading reviews on a delivery platform without being able to tell whether the reviews are fake. When the AI gets the search results, it simply copies, pastes, and summarizes them without checking whether the content is true.

No notion of content quality, only keywords

When the AI evaluates content, it mostly checks whether the keywords are present, not whether the content is any good. For example, if an article keeps repeating that some product is "the number one AI media tool", the AI concludes that the claim matters but never asks whether it is self-praise. It is like seeing a film advertised everywhere as "the best movie": the repetition makes the claim sound authoritative, whether or not the film is actually good.
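To see why pure keyword matching is so easy to game, here is a minimal sketch in Python. It does not describe how any real AI ranks content: `keyword_score`, the product name ToolX, and both sample texts are invented for illustration. It only shows that a page repeating a phrase outranks an honest review when relevance is a raw keyword count.

```python
import re


def keyword_score(text: str, keyword: str) -> int:
    """Naive relevance: count how many times the keyword appears (case-insensitive)."""
    return len(re.findall(re.escape(keyword.lower()), text.lower()))


# Hypothetical sample pages; ToolX is a made-up product name.
promo = (
    "ToolX is the number one AI media tool. "
    "ToolX is the best AI media tool. "
    "Choose the AI media tool ToolX."
)
review = (
    "We tested five products for two weeks. "
    "As an AI media tool, ToolX crashed often and exported slowly."
)

pages = {"promo": promo, "review": review}

# Rank pages by keyword count only, highest first.
ranked = sorted(
    pages,
    key=lambda name: keyword_score(pages[name], "AI media tool"),
    reverse=True,
)
```

The self-promotional page comes first simply because it repeats the phrase more often; nothing in the score asks who wrote the page or whether anyone else agrees with it.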

Mistakes are hard to correct, so false information lingers

Even after an AI is found to be wrong, it is hard to fix. For example, the article has been deleted, yet the AI may keep recommending it for several days, because the AI has no real-time error-correction mechanism. Once false content gets in, it is like garbage in a bin that nobody empties in time: it just stays there.
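One way to picture the missing correction mechanism is a cache that is never re-checked. The sketch below is a hypothetical illustration, not a description of any vendor's system: `CachedPage`, `citable_pages`, the one-day `max_age`, and the `is_live` callback (a stand-in for re-fetching the URL) are all assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable


@dataclass
class CachedPage:
    url: str
    summary: str
    fetched_at: datetime


def citable_pages(
    cache: list[CachedPage],
    is_live: Callable[[str], bool],
    now: datetime,
    max_age: timedelta = timedelta(days=1),
) -> list[CachedPage]:
    """Keep recent entries as they are; re-check older ones and drop those whose source is gone."""
    kept = []
    for page in cache:
        is_stale = now - page.fetched_at > max_age
        if is_stale and not is_live(page.url):
            continue  # the source was deleted, so stop citing it
        kept.append(page)
    return kept


# Hypothetical data: one page has been deleted since it was cached.
now = datetime(2025, 10, 10)
cache = [
    CachedPage("https://example.com/deleted-roundup", "ToolX is number one", now - timedelta(days=3)),
    CachedPage("https://example.com/review", "Independent review", now - timedelta(days=3)),
]
live_urls = {"https://example.com/review"}
kept = citable_pages(cache, lambda url: url in live_urls, now)
```

With a re-check like this, a deleted article stops being cited at the next validation pass instead of lingering for days.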

IV. The risks to watch most closely: not just being cheated, but possibly costing lives

The harm of black hat GEO is not limited to users buying the wrong thing; it is spreading into high-risk areas:

1. Health care: it may cost lives

If someone uses black hat GEO to get an AI to recommend a particular remedy for lung cancer and a patient believes it, formal treatment could be delayed. In the past it was SEO that deceived patients; now it is AI, with wider reach and a more covert method, because users assume that what the AI says is true and lower their guard.

2. Finance: one sentence could lose you money

Many people now ask AI questions such as "Which fund is good?" or "Which stock is worth investing in?" If the AI has been poisoned and recommends high-risk financial products or bogus investment schemes, users who follow the advice may lose heavily. Investigations in the United States have found that many people lost money after relying on AI for financial advice.

3. Consumer goods: good products get buried, bad products take over

Take big-ticket items such as refrigerators and air conditioners, where buyers need to study the specifications: many people rely on AI to narrow the choice. If poor-quality products are pushed to the top by black hat GEO while good ones go unnoticed, the result is "bad money driving out good": users pay good money and still end up with bad products, and honest merchants lose out.

V. What can product managers do? Three practical methods to make AI less gullible

As product people, we do not need to wait for regulation. We can start from product design now and add a few layers to the AI's safety net:

Content scoring: teach the AI to choose what to trust

Don't let the AI believe whatever it sees. Build a simple content rating mechanism, for example:
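As a rough illustration only, a rating mechanism of this kind might combine who published a page, how many independent sources agree with it, and how promotional its wording is. Everything in this sketch is an assumption made for the example: the `Page` fields, the source categories, the weights, and the list of hype words.

```python
from dataclasses import dataclass


@dataclass
class Page:
    source_type: str          # e.g. "official", "established_media", "personal_blog"
    independent_sources: int  # how many unrelated sites make the same claim
    text: str


# Hypothetical weights; a real system would need to tune and validate these.
SOURCE_WEIGHT = {
    "official": 1.0,
    "established_media": 0.8,
    "personal_blog": 0.4,
    "unknown": 0.2,
}
HYPE_WORDS = ("number one", "best", "leading", "must-have")


def trust_score(page: Page) -> float:
    """Return a 0..1 score: source authority plus corroboration, minus a hype penalty."""
    base = SOURCE_WEIGHT.get(page.source_type, SOURCE_WEIGHT["unknown"])
    corroboration = min(page.independent_sources, 3) / 3
    text = page.text.lower()
    hype_hits = sum(text.count(word) for word in HYPE_WORDS)
    penalty = min(hype_hits * 0.1, 0.5)
    score = 0.6 * base + 0.4 * corroboration - penalty
    return round(max(0.0, min(1.0, score)), 2)


promo = Page("personal_blog", 0, "ToolX is the best, the number one, the leading tool.")
review = Page("established_media", 3, "ToolX crashed twice in our two-week test.")
```

Here the uncorroborated, hype-heavy page scores far below the corroborated review, so the AI could rank or filter on the score before summarizing anything.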

Watch for abnormal signals: raise an early warning when something looks wrong

Just as a product needs risk control over user behavior, an AI needs abnormal-behavior monitoring, for example:
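One possible form of such monitoring, sketched here under stated assumptions, is to watch how often each brand shows up in the AI's recommendations and flag sudden jumps. The function name `is_spike`, the seven-day minimum history, the z-score threshold, and the minimum count are hypothetical choices, not figures from any real system.

```python
from statistics import mean, pstdev


def is_spike(
    history: list[int],
    today: int,
    z_threshold: float = 3.0,
    min_count: int = 20,
) -> bool:
    """Flag today's recommendation count if it sits far above the recent daily baseline."""
    if len(history) < 7 or today < min_count:
        return False  # too little data, or too few mentions to matter
    baseline = mean(history)
    spread = max(pstdev(history), 1.0)  # floor the spread so flat histories are not oversensitive
    return today > baseline + z_threshold * spread


# Hypothetical daily counts of how often a brand appeared in AI recommendations.
last_week = [4, 5, 6, 5, 4, 6, 5]
alert = is_spike(last_week, today=60)
```

A flagged brand would not be blocked automatically; the flag is only a cue for a human or a stricter check to look at which newly published pages are driving the jump.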

Tell users where the information comes from: don't let the AI make unsupported claims

Many AI answers today go straight to the conclusion, and users cannot tell where the AI got its information. A transparency module could be added, for example:
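A transparency module could be as simple as attaching a labeled source list to every answer. The following is a minimal sketch, with the `Source` fields, the `render_answer` function, and the example URL all assumed for illustration rather than taken from any existing product.

```python
from dataclasses import dataclass


@dataclass
class Source:
    title: str
    url: str
    published: str  # publication date as text, e.g. "2025-10-08"
    kind: str       # e.g. "independent review", "vendor page"


def render_answer(answer: str, sources: list[Source]) -> str:
    """Append a numbered source list so users can judge where the answer came from."""
    lines = [answer, "", "Sources:"]
    if not sources:
        lines.append("  (none found; treat this answer with caution)")
    for index, source in enumerate(sources, start=1):
        lines.append(
            f"  [{index}] {source.title} ({source.kind}, {source.published}) {source.url}"
        )
    return "\n".join(lines)


# Hypothetical example data.
shown = render_answer(
    "ToolX is one option, but reviews report stability problems.",
    [Source("Two-week test of ToolX", "https://example.com/review", "2025-10-08", "independent review")],
)
```

Labeling each source by kind matters as much as listing it: a user who sees that the only source is a vendor page can discount the answer accordingly.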

VI. Beyond the product: the industry must work together to prevent the next scandal

Black hat GEO is not one company's problem. It takes the whole industry:

This experiment is really an alarm: the more popular AI becomes, the greater the risk from black hat GEO. If we do not take it seriously now, we may well see an AI-era scandal of our own, and then the damage will fall not only on users but on trust in the entire AI industry.