Many webmasters share the same frustration: the site looks great, the keywords are in place and the backlinks have been built, yet the search engine barely indexes the site, not even the core pages.

Many people rush to hunt for SEO tricks. They rewrite titles, re-stuff keywords, buy backlinks and go round in circles, while ignoring one of the most fundamental issues: your website's code may be the real "culprit" holding you back.

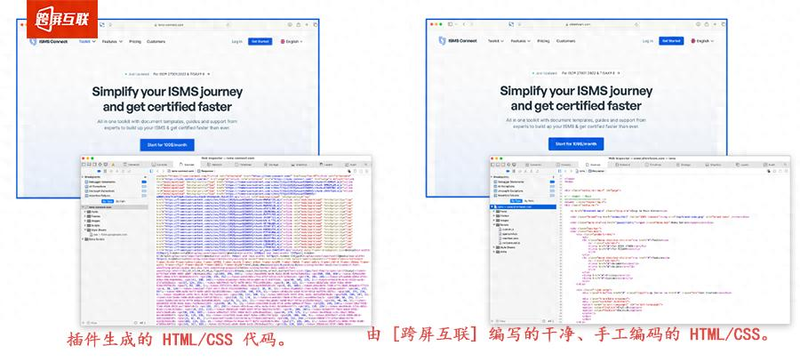

Drag-and-drop and plugin-built sites: good looks, bad code

Building a website yourself is now extremely convenient. With a drag-and-drop site builder and a handful of plugins, a few clicks and drags are enough to put a good-looking site online. No coding knowledge, no developers and a lot of time saved, so many SMEs and individual webmasters prefer this approach.

But I keep telling webmasters: people look at the interface; search engines look at the code.

The polished layouts and smooth animations we see with our own eyes may look, to search engine crawlers (e.g. Baiduspider, Googlebot), like a pile of messy, redundant code.

To deliver "one-click generation", drag-and-drop tools automatically produce large amounts of useless, redundant tags and duplicated code, and can introduce disordered tag nesting and JavaScript rendering problems. Plugins make it worse: every extra plugin stacks on more code, scripts conflict with each other, and some plugins even tamper with the page's canonical tags. The result is that crawlers cannot find the focus of the page and may simply give up crawling it. After all, a crawler has a limited crawl budget and will not waste it on a pile of code it cannot understand.
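
To get a rough idea of what a crawler that does not execute JavaScript receives, you can strip scripts and styles from the raw HTML and see what text is left. The sketch below uses only Python's standard `html.parser`; the function names `visible_text` and `text_to_html_ratio` are hypothetical, and the ratio is a crude proxy for markup bloat that we chose for illustration, not a metric published by Baidu or Google.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects the text present in raw HTML, before any JavaScript runs."""

    SKIP = {"script", "style"}  # content of these tags is code, not page text

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-blank text that is outside <script>/<style>.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def text_to_html_ratio(html: str) -> float:
    """Share of the raw HTML that is visible text (hypothetical bloat proxy)."""
    return len(visible_text(html)) / len(html) if html else 0.0


if __name__ == "__main__":
    # A JavaScript-rendered shell: the product text only exists after JS runs.
    spa = '<html><body><div id="app"></div><script src="app.js"></script></body></html>'
    print(repr(visible_text(spa)), text_to_html_ratio(spa))
```

An empty or near-empty result for a page that looks full in the browser suggests its core content depends on client-side rendering.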

Baidu's official "Baidu Search Optimization Guide" already states clearly that a simple DOM structure is easier to index. Websites that look sophisticated often fall down on their code; no matter how much surface-level SEO you do on top, they are like a "castle in the air" on a fragile foundation and naturally struggle to get indexed.
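
One rough way to check how "simple" a DOM is: count the elements and measure the maximum nesting depth. This is a minimal sketch using Python's standard `html.parser`; `dom_stats` is a hypothetical name, it assumes non-void tags are properly closed (unclosed or mis-nested tags will skew the depth), and the guide cited above does not give numeric thresholds, so compare pages against each other rather than against a fixed limit.

```python
from html.parser import HTMLParser

# HTML void elements never have a closing tag, so they must not increase depth.
VOID = {"area", "base", "br", "col", "embed", "hr", "img", "input",
        "link", "meta", "source", "track", "wbr"}


class DomStats(HTMLParser):
    def __init__(self):
        super().__init__()
        self.depth = 0
        self.max_depth = 0
        self.elements = 0

    def handle_starttag(self, tag, attrs):
        self.elements += 1
        if tag in VOID:
            # A void element sits one level below its parent but opens nothing.
            self.max_depth = max(self.max_depth, self.depth + 1)
            return
        self.depth += 1
        self.max_depth = max(self.max_depth, self.depth)

    def handle_endtag(self, tag):
        if tag not in VOID and self.depth > 0:
            self.depth -= 1


def dom_stats(html: str) -> tuple[int, int]:
    """Return (element_count, max_nesting_depth) for an HTML string."""
    parser = DomStats()
    parser.feed(html)
    parser.close()
    return parser.elements, parser.max_depth


if __name__ == "__main__":
    print(dom_stats("<main><h1>Title</h1><p>Text</p></main>"))
    print(dom_stats("<div><div><div><div><p>Text</p></div></div></div></div>"))
```

The two sample pages carry the same amount of text, but the second needs more elements and more than twice the nesting depth to say less.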

10+ years of building websites: code is the foundation of indexing

Cross-Screen Interconnection has spent more than a decade building websites and doing SEO work, serving thousands of webmasters. We have seen far too many sites fail to get indexed because of code problems, and we have helped many clients solve exactly this.

We have always followed one principle when building websites: code first, SEO second, with code optimization ahead of everything else.

This isn't stubbornness; more than a decade of experience has taught us that how readable and standards-compliant your code is directly determines whether search engines can "read" your website and whether they can crawl and index your pages. That is the underlying logic of SEO. It's like building a house: without a solid foundation, no amount of renovation will help, and the house may even collapse. SEO trends for 2026 also show that code optimization has moved from a "nice-to-have" to a "must-have", and that every 10% reduction in redundant code noticeably improves page load speed and the likelihood of being indexed.

So we always advise clients: wherever possible, build your website with hand-written code.

Hand-writing code isn't about showing off; it's about controlling code quality at the source. That means removing redundant content, using tags according to the standards, optimizing the DOM structure and replacing disordered div layouts with semantic tags, so that every line of code is clear, concise and readable, matches how search engines crawl, and makes pages load faster.
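
To make "replace div soup with semantic tags" measurable, you can count semantic elements against generic `div`/`span` containers. This is a minimal sketch with Python's standard `html.parser`; the tag sets and the `semantic_ratio` name are our own illustrative choices, not a standard or search-engine metric.

```python
from collections import Counter
from html.parser import HTMLParser

# Illustrative choice of "semantic" structure tags versus generic containers.
SEMANTIC = {"header", "nav", "main", "article", "section", "aside", "footer",
            "h1", "h2", "h3", "h4", "h5", "h6"}
GENERIC = {"div", "span"}


class TagCounter(HTMLParser):
    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        self.counts[tag] += 1  # html.parser already lowercases tag names


def semantic_ratio(html: str) -> float:
    """Fraction of structural tags that are semantic (0.0 = pure div soup)."""
    parser = TagCounter()
    parser.feed(html)
    parser.close()
    semantic = sum(parser.counts[t] for t in SEMANTIC)
    generic = sum(parser.counts[t] for t in GENERIC)
    total = semantic + generic
    return semantic / total if total else 0.0


if __name__ == "__main__":
    div_soup = ('<div class="header"><div class="nav"><span>Home</span></div></div>'
                '<div class="content"><div class="title">Beams</div></div>')
    semantic_page = "<header><nav>Home</nav></header><main><h1>Beams</h1></main>"
    print(semantic_ratio(div_soup), semantic_ratio(semantic_page))
```

Both samples describe the same page; only the second tells a crawler which part is navigation and which part is the main heading.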

Real case: after the code was rebuilt, indexing turned around

Not long ago, a client in the construction industry had built a website with a drag-and-drop tool. The interface was polished and the product catalog complete, yet for most of a year the number of pages Baidu had indexed stayed in single digits, the core keywords did not rank at all and traffic was close to zero.

He had hired several SEO companies and spent a lot of money with no improvement in indexing before he finally came to us.

As soon as our technical team looked at the site's code, the problem was obvious: more than 60% of the page code was redundant; there were large numbers of pointless nested tags; the core content was rendered by JavaScript, so crawlers fetched little more than empty markup; there were HTML tag errors; and even the most basic canonical tags conflicted with each other. How could a search engine read a site like that?
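
Conflicting canonical tags, as in this case, are easy to detect automatically: collect every `<link rel="canonical">` on the page and check whether they point to different URLs. A minimal sketch with Python's standard `html.parser`; the function names are hypothetical, and URLs are compared as plain strings, so `/a` and `/a/` count as different.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        # Self-closing <link ... /> also arrives here via handle_startendtag.
        if tag != "link":
            return
        attr = dict(attrs)
        # rel may hold several space-separated tokens; None for a bare attribute.
        rels = (attr.get("rel") or "").lower().split()
        if "canonical" in rels and attr.get("href"):
            self.hrefs.append(attr["href"])


def canonical_urls(html: str) -> list[str]:
    finder = CanonicalFinder()
    finder.feed(html)
    finder.close()
    return finder.hrefs


def has_canonical_conflict(html: str) -> bool:
    """True if the page declares more than one distinct canonical URL."""
    return len(set(canonical_urls(html))) > 1


if __name__ == "__main__":
    page = ('<head><link rel="canonical" href="https://example.com/a">'
            '<link rel="canonical" href="https://example.com/b"></head>')
    print(canonical_urls(page), has_canonical_conflict(page))
```

A page that repeats the same canonical URL twice is untidy but not contradictory, which is why the check compares distinct URLs rather than the tag count.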

In the end, the client decided to have us rebuild the site. We used our own in-house system and hand-written code, with no drag-and-drop tools or redundant plugins, and strictly followed coding standards: trimming redundancy, optimizing the loading strategy, keeping the tag hierarchy clean and adapting the site for mobile, so that crawlers could easily reach every core page.

After the new site went live, we didn't rush into backlinks or keywords; we only did basic code debugging and fine-tuning. Within a month, Baidu began indexing his pages and the core keywords gradually climbed to the first page. Within three months, indexed pages exceeded 500 and organic traffic had grown more than tenfold.

This case confirms it once again: poor indexing isn't necessarily an SEO failure; it may well be your code holding you back.

Key point: Cross-Screen Interconnection's code optimization unblocks your site's vital channels

Many webmasters might ask: "I don't know how to code and I don't want to rebuild my website. What should I do?"

In fact, website code optimization is one of Cross-Screen Interconnection's core services. It is the foundation of SEO and the most easily overlooked, yet most directly effective, kind of optimization.

We don't do anything superfluous: the whole process revolves around "letting search engines read your website" and targets the code problems common to drag-and-drop and plugin-built sites.

Our code optimization isn't just a superficial code tweak. Drawing on more than a decade of website-building and SEO experience, we straighten out your code and unblock your site's "vital channels". Once search engines can easily crawl and index your pages, later keyword optimization can take effect, the backlinks you build can do their job, and indexing and rankings will rise steadily on their own.

I'll be honest with you

When doing SEO, don't rush. Rather than pouring time and money into surface work, sort out the code first.

People look at the interface; search engines look at the code. This is the underlying logic of website building and SEO, and the principle Cross-Screen Interconnection has followed for more than a decade.

If your website is also poorly indexed and fails to rank for no apparent reason, check your website's code first. If you don't know how to code, come to Cross-Screen Interconnection: we can provide professional code optimization, fix the problem at its root and help you avoid detours on your SEO journey.

After all, good code means good indexing, and good indexing means good traffic.