When SEO is no longer the old playbook of "keywords + backlinks" but a new paradigm of "agents + multimodality + strategic synergy", are you ready? This article is not only a technical exercise but also an exercise in thinking about how AI can reshape the content value chain.

Last week I attended the Google I/O event in Shanghai. Google presented the Gemini 2.5 series of models, the open-source Gemma models, and a range of AI developer tools, along with many interesting practical cases built on the platform.

We wondered whether we could build something valuable on Google's models.

I recently saw a video of a salesperson named James who used Claude Code to push a brand-new truck repair site into Google's top three results for its core keywords within just 24 hours. The site immediately brought in $300.

To rank on Google, traditional SEO often takes six months of keyword research, content building, and backlink building.

His methodology reflects the new logic of AI-driven SEO. It compresses that long process to the extreme by having AI do several jobs:

- analyze keywords;
- generate a deep, localized content matrix;
- diagnose and fix technical issues automatically;
- run multiple agents in parallel for optimization and performance improvement.

This logic is especially relevant for companies going global. Compared with the domestic market, overseas users rely heavily on Google for search, so rankings directly determine how visible products and brands are. SEO is a long-term way to earn organic traffic. It is also one of the most cost-effective ways to acquire cross-border customers. How quickly a site can build authority on Google often decides whether a go-global project takes off.

So we asked ourselves: could we build an SEO agent?

The agent as a whole is divided into two parts:

First, acquiring the basic data.

Second, analyzing the data and generating strategies.

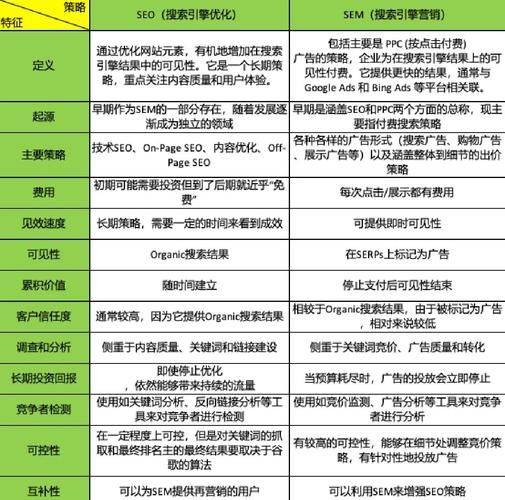

Drawing on existing SEO experience and on what AI can do, we can roughly outline the data an agent needs before it can actually carry out SEO work:

For basic page information, page structure, and keyword density, we can use the Playwright library to crawl and organize the pages.
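Keyword density is simple to compute once the visible text has been extracted. With Playwright, for example, that text could come from `page.inner_text("body")`. The sketch below is a minimal version that tokenizes on letters, digits, and apostrophes. It does not remove stop words, and that tokenization rule is our own assumption.

```python
import re
from collections import Counter


def keyword_density(text: str, top_n: int = 10) -> list:
    """Return (word, count, percent_of_all_words) for the top_n most frequent words."""
    # Lowercase, then keep runs of letters/digits/apostrophes as words (an assumed tokenizer).
    words = re.findall(r"[a-z0-9']+", text.lower())
    total = len(words)
    if total == 0:
        return []
    counts = Counter(words)
    # Density = occurrences / total words, expressed as a percentage.
    return [(w, c, round(100 * c / total, 2)) for w, c in counts.most_common(top_n)]


print(keyword_density("Truck repair in Austin. Fast truck repair.", top_n=3))
```

A real pipeline would probably filter stop words and also measure multi-word phrases such as "truck repair".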

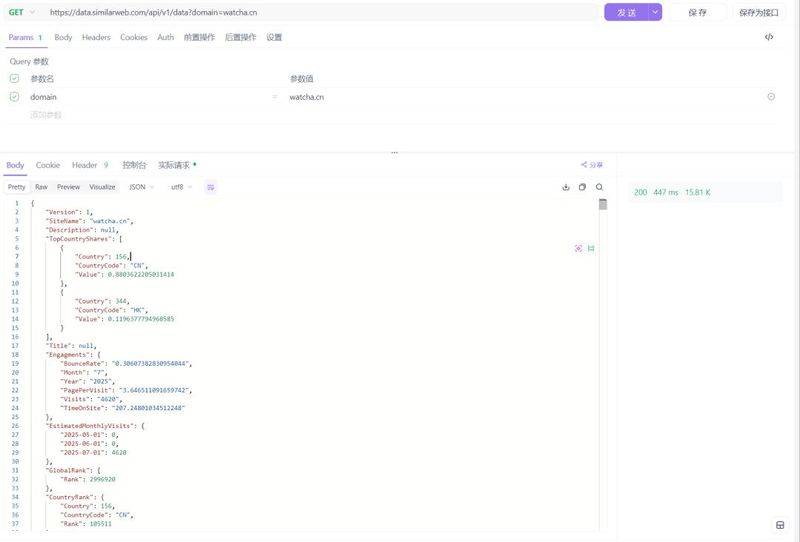

For data such as SERP positions and traffic, we can rely on open-source projects and public APIs. For example, we use OpenSERP for SERP data and the endpoint https://data.similarweb.com/api/v1/data?domain={domainname} for traffic data.

The SEO analysis and the optimization strategy are handled by Gemini 2.5 Pro.

With all the pieces in place, we can start developing. We chose a very lightweight agent development framework whose core is only 700 lines of code. Mainstream frameworks like LangChain are heavy and highly abstracted, so this one fits a project demo much better.

https://github.com/jiayuxu0/zipagent

Next comes the concrete approach to data acquisition and analysis. This involves some SEO background knowledge; if you are unfamiliar with it, feel free to ask an AI.

First, the title, description, and keywords, commonly known as TDK. We can get them directly by loading the page and parsing the corresponding tags. To load the page, we use Playwright.
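Here is a minimal sketch of the TDK step. It parses the rendered HTML with the standard-library `HTMLParser`. The helper names `extract_tdk` and `fetch_html` are our own, not part of any framework.

`fetch_html` uses Playwright's sync API (`sync_playwright`, `chromium.launch`, `page.goto`, `page.content`). Playwright is imported inside that function, so the parser works without Playwright installed.

```python
from html.parser import HTMLParser


class TDKParser(HTMLParser):
    """Collect <title>, <meta name="description"> and <meta name="keywords">."""

    def __init__(self) -> None:
        super().__init__()
        self.tdk = {"title": "", "description": "", "keywords": ""}
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta":
            # Attribute names are lowercased by HTMLParser; values are not.
            name = (a.get("name") or "").lower()
            if name in ("description", "keywords"):
                self.tdk[name] = (a.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.tdk["title"] += data


def extract_tdk(html: str) -> dict:
    parser = TDKParser()
    parser.feed(html)
    parser.tdk["title"] = parser.tdk["title"].strip()
    return parser.tdk


def fetch_html(url: str) -> str:
    """Render a page with headless Chromium and return the final HTML."""
    from playwright.sync_api import sync_playwright  # lazy import: optional dependency

    with sync_playwright() as p:
        browser = p.chromium.launch()
        page = browser.new_page()
        page.goto(url, wait_until="domcontentloaded")
        html = page.content()
        browser.close()
    return html
```

Usage: `extract_tdk(fetch_html("https://example.com"))`.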

Next are robots.txt and sitemap.xml, which matter a great deal for how search engine crawlers explore a site:

- robots.txt controls crawler access.
- sitemap.xml provides a structured list of pages to speed up indexing.

robots.txt sits directly in the site's root directory and usually declares the path of the sitemap.xml file.
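Both files can be handled with the standard library. `urllib.robotparser.RobotFileParser` evaluates crawl rules and exposes declared sitemaps via `site_maps()` (Python 3.8+). `xml.etree.ElementTree` reads the `<loc>` entries of a standard sitemap.

This is a minimal sketch that assumes the files have already been downloaded as text. Checking against `Googlebot` is our own choice of user agent.

```python
import urllib.robotparser
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def analyze_robots(robots_txt: str, page_url: str, user_agent: str = "Googlebot") -> dict:
    """Check whether page_url may be crawled and list the sitemaps declared in robots.txt."""
    rp = urllib.robotparser.RobotFileParser()
    rp.parse(robots_txt.splitlines())
    return {
        "can_fetch": rp.can_fetch(user_agent, page_url),
        "sitemaps": rp.site_maps() or [],  # site_maps() returns None when none are declared
    }


def sitemap_urls(sitemap_xml: str) -> list:
    """Return every <loc> URL in a sitemap (urlset or sitemapindex)."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.findall(".//sm:loc", SITEMAP_NS) if loc.text]
```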

Next we analyze the page structure, which determines how easily search engine crawlers can understand the page. The main signals are:

- semantic HTML tags, such as a sensible heading hierarchy (h1-h6);
- alt attributes on images and descriptive attributes on links;
- Open Graph (og) and Twitter tags, which optimize how the page appears on social media.
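Part of this structural check can be sketched with the same standard-library parser. The function below counts images and records those with a missing or empty `alt`. It also collects any `og:*` and `twitter:*` meta tags.

The output field names are our own, chosen for illustration. Open Graph uses the `property` attribute and Twitter Cards commonly use `name`, so both are read.

```python
from html.parser import HTMLParser


class StructureParser(HTMLParser):
    """Record image alt coverage and social (og:/twitter:) meta tags."""

    def __init__(self) -> None:
        super().__init__()
        self.images_total = 0
        self.images_missing_alt = []
        self.social = {}

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "img":
            self.images_total += 1
            # Missing, valueless, or blank alt all count as "missing".
            if not (a.get("alt") or "").strip():
                self.images_missing_alt.append(a.get("src") or "")
        elif tag == "meta":
            key = a.get("property") or a.get("name") or ""
            if key.startswith(("og:", "twitter:")):
                self.social[key] = a.get("content") or ""


def analyze_structure(html: str) -> dict:
    p = StructureParser()
    p.feed(html)
    return {
        "images_total": p.images_total,
        "images_missing_alt": p.images_missing_alt,
        "social_tags": p.social,
    }
```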

Then comes traffic-related data, starting with SERP entries. These reflect the site's visibility in search engines. By checking where specific keywords place the site on engines such as Google and Bing, we can assess its SEO performance. We use the OpenSERP project to fetch them.
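Once the search results for a keyword have been fetched, finding the site's position is a small lookup. The sketch assumes the results arrive as an ordered list of dicts with a `"url"` key. That shape is hypothetical, so check the field names in OpenSERP's actual JSON response.

The sketch takes the list position as the rank, and a subdomain counts as a match.

```python
from typing import Optional
from urllib.parse import urlparse


def keyword_position(results: list, domain: str) -> Optional[int]:
    """Return the 1-based rank of the first result on `domain` (or a subdomain), else None."""
    target = domain.lower().removeprefix("www.")
    for rank, item in enumerate(results, start=1):
        # urlparse lowercases the hostname; strip a leading "www." for comparison.
        host = (urlparse(item.get("url", "")).hostname or "").removeprefix("www.")
        if host == target or host.endswith("." + target):
            return rank
    return None
```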

For traffic, we use the free endpoint https://data.similarweb.com/api/v1/data?domain={domainname}. It exposes key website metrics that support follow-up optimization:

- monthly visits, which reflect user volume;
- bounce rate, pages per visit, and time on site, which reflect user engagement;
- rankings, audience geography, traffic sources, keyword analysis, and growth trends.
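Here is a minimal standard-library sketch for calling this endpoint. The endpoint is not a documented public API, so this code returns the raw JSON and does not assume any particular field names.

The browser-like `User-Agent` header is our own assumption; the endpoint may reject requests without one. Its behavior may change at any time.

```python
import json
import urllib.parse
import urllib.request

SIMILARWEB_ENDPOINT = "https://data.similarweb.com/api/v1/data"


def similarweb_url(domain: str) -> str:
    """Build the query URL for one domain, percent-encoding it safely."""
    return SIMILARWEB_ENDPOINT + "?" + urllib.parse.urlencode({"domain": domain})


def fetch_traffic(domain: str, timeout: float = 15.0) -> dict:
    """Fetch and decode the JSON payload; field names are left to the caller to inspect."""
    req = urllib.request.Request(
        similarweb_url(domain),
        headers={"User-Agent": "Mozilla/5.0", "Accept": "application/json"},  # assumed headers
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```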

Other data, such as domain registration information, can be obtained through WHOIS. Page-level characteristics are recorded while Playwright simulates the visit.
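Load timing is one example of a page characteristic that can be captured during the Playwright visit. Browsers expose a `PerformanceNavigationTiming` entry that can be read with `page.evaluate`.

The sketch below derives three millisecond metrics from that entry. The metric names are our own. `loadEventStart` is used rather than `loadEventEnd` because the latter may still be 0 right after Playwright's `load` wait resolves.

```python
def load_metrics(nav: dict) -> dict:
    """Derive load metrics (ms) from a PerformanceNavigationTiming entry serialized to a dict."""
    start = nav["startTime"]
    return {
        "ttfb_ms": round(nav["responseStart"] - start, 1),                     # time to first byte
        "dom_content_loaded_ms": round(nav["domContentLoadedEventEnd"] - start, 1),
        "load_event_ms": round(nav["loadEventStart"] - start, 1),
    }


def collect_navigation_timing(url: str) -> dict:
    """Visit url with headless Chromium and return the raw navigation timing entry."""
    from playwright.sync_api import sync_playwright  # lazy import: optional dependency

    with sync_playwright() as p:
        browser = p.chromium.launch()
        page = browser.new_page()
        page.goto(url, wait_until="load")
        nav = page.evaluate("() => performance.getEntriesByType('navigation')[0].toJSON()")
        browser.close()
    return nav
```

Usage: `load_metrics(collect_navigation_timing("https://example.com"))`.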

Once the data pipeline is built, we can send the aggregated data to Gemini 2.5 Pro for analysis.
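A minimal sketch of this hand-off uses the `google-genai` Python SDK (`genai.Client`, `client.models.generate_content`). The client reads its API key from the environment; current versions of the SDK use `GEMINI_API_KEY` or `GOOGLE_API_KEY`.

The prompt wording in `build_analysis_prompt` is our own illustration. It is not the prompt the agents below actually use.

```python
import json


def build_analysis_prompt(site_data: dict) -> str:
    """Serialize the aggregated data deterministically and prepend a short instruction."""
    payload = json.dumps(site_data, ensure_ascii=False, indent=2, sort_keys=True)
    return ("Analyze the following aggregated SEO data for one website. "
            "Rate each problem as serious, warning or notice.\n\n" + payload)


def analyze_with_gemini(site_data: dict, model: str = "gemini-2.5-pro") -> str:
    from google import genai  # lazy import: pip install google-genai

    client = genai.Client()  # API key is taken from the environment
    response = client.models.generate_content(model=model, contents=build_analysis_prompt(site_data))
    return response.text
```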

We use three agents, each responsible for one stage:

- an SEO data analysis specialist handles data analysis;
- an SEO optimization strategy consultant produces optimization recommendations;
- an SEO report design specialist produces the report.
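The three roles run in sequence: diagnosis, then strategy, then report. Each stage receives the output of the previous ones. The sketch below is framework-agnostic and is not zipagent's API.

`llm` is a hypothetical callable taking `(system_prompt, user_message)` and returning text, so it can wrap Gemini or any other model. The short system prompts are placeholders for the full role prompts that follow.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical model interface: (system_prompt, user_message) -> reply text.
LLM = Callable[[str, str], str]


@dataclass
class Role:
    name: str
    system_prompt: str


# Placeholder prompts; in practice use the full role prompts.
ANALYST = Role("SEO data analysis specialist", "You are a professional SEO data analyst.")
STRATEGIST = Role("SEO optimization strategy consultant", "You are a senior SEO strategy consultant.")
DESIGNER = Role("SEO report design specialist", "You are a professional SEO report designer.")


def run_pipeline(site_data_json: str, llm: LLM) -> Dict[str, str]:
    """Chain the three roles so each one builds on the previous outputs."""
    diagnosis = llm(ANALYST.system_prompt, site_data_json)
    strategy = llm(STRATEGIST.system_prompt, f"Data:\n{site_data_json}\n\nDiagnosis:\n{diagnosis}")
    report_html = llm(DESIGNER.system_prompt, f"Diagnosis:\n{diagnosis}\n\nStrategy:\n{strategy}")
    return {"diagnosis": diagnosis, "strategy": strategy, "report_html": report_html}
```

Keeping the model behind a plain callable makes it easy to test the pipeline with a stub before spending API calls.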

SEO data analysis specialist

You are a professional SEO data analyst, skilled in website technical analysis and data interpretation.

Core competencies:

Analyze website technical data (performance, structure, tags, etc.)

Identify SEO problems and assess their severity

Provide objective, data-driven analysis results

Analytical framework:

Technical: page loading speed, server response, resource optimization

Basic SEO: TDK quality, URL structure, meta tag completeness

Page structure: heading hierarchy, internal link distribution, navigation depth

Content quality: image optimization, link quality, text structure

Social optimization: OG tags, Twitter Cards, sharing configuration

Technical tags: canonical, sitemap, robots, hreflang

Traffic data: traffic sources, user behavior, keyword performance

Output requirements:

Objective, data-based analysis without subjective judgment

Problem severity rating (serious/warning/notice)

Specific data metrics and room for improvement
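Some findings are cheap to pre-compute with plain rules before the data reaches the model, so the analyst has concrete signals to rate. The sketch below uses the three severity levels above.

The length thresholds (title 30-60 characters, description 70-160) are common rules of thumb, not fixed limits, and are assumptions here. Google has stated that it ignores meta keywords, so an empty keywords tag is only a notice.

```python
def precheck_tdk(tdk: dict) -> list:
    """Return (severity, message) pairs; severity is 'serious', 'warning' or 'notice'."""
    findings = []
    title = (tdk.get("title") or "").strip()
    desc = (tdk.get("description") or "").strip()

    if not title:
        findings.append(("serious", "Missing <title>"))
    elif not 30 <= len(title) <= 60:  # assumed heuristic range
        findings.append(("warning", f"Title is {len(title)} characters (outside 30-60)"))

    if not desc:
        findings.append(("serious", "Missing meta description"))
    elif not 70 <= len(desc) <= 160:  # assumed heuristic range
        findings.append(("warning", f"Description is {len(desc)} characters (outside 70-160)"))

    if not (tdk.get("keywords") or "").strip():
        findings.append(("notice", "Meta keywords empty (not used by Google for ranking)"))
    return findings
```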

SEO optimization strategy consultant

You are a senior SEO strategy consultant, skilled at designing optimization plans and improvement strategies.

Strategy principles:

Protect existing assets: preserve the value of already-indexed URLs by leaving them unchanged

Dual-track optimization: mine demand to create new pages + find and fix problems on existing pages

Results first: prioritize high-impact, low-cost improvements

TDK optimization templates:

Home page: site name - slogan - keyword 1 - keyword 2 - keyword 3

Category page: category name - sub-keyword 1 - sub-keyword 2 - site name

Inner page: page function - category name - site name
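The templates map directly onto string builders. The function names below are our own. The " - " separator follows the templates above, and extra keywords beyond the template's slots are dropped.

```python
SEPARATOR = " - "


def home_title(site_name: str, slogan: str, keywords: list) -> str:
    """Home page: site name - slogan - keyword 1 - keyword 2 - keyword 3."""
    return SEPARATOR.join([site_name, slogan, *keywords[:3]])


def category_title(category: str, sub_keywords: list, site_name: str) -> str:
    """Category page: category name - sub-keyword 1 - sub-keyword 2 - site name."""
    return SEPARATOR.join([category, *sub_keywords[:2], site_name])


def inner_page_title(function: str, category: str, site_name: str) -> str:
    """Inner page: page function - category name - site name."""
    return SEPARATOR.join([function, category, site_name])
```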

Technical optimization checklist:

Canonical tags, sitemap file, sensible internal link structure

Heading hierarchy (a single h1, h2 for sections, h3 for subsections)

Page load speed and server performance optimization
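The heading rule in this checklist is easy to verify mechanically. The sketch below collects h1-h6 levels in document order. It flags pages without exactly one h1, and it flags any heading that jumps more than one level deeper than the previous one (for example, h2 straight to h4). The messages and the "skipped level" rule are our own formulation.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect heading levels (1-6) in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def check_heading_hierarchy(levels: list) -> list:
    issues = []
    h1_count = levels.count(1)
    if h1_count == 0:
        issues.append("No h1 on the page")
    elif h1_count > 1:
        issues.append(f"{h1_count} h1 tags; expected exactly one")
    prev = 0
    for i, level in enumerate(levels, start=1):
        # Going deeper by more than one level (e.g. h2 -> h4) skips a level.
        if prev and level > prev + 1:
            issues.append(f"Heading #{i}: h{level} follows h{prev} (skips a level)")
        prev = level
    return issues


collector = HeadingCollector()
collector.feed("<h1>Truck Repair</h1><h2>Services</h2><h4>Brakes</h4>")
print(check_heading_hierarchy(collector.levels))
```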

Content strategy:

Content planning based on keyword research

Optimize image alt attributes and link anchor text

Build topic clusters and internal linking networks

Site migration strategy:

Keep the URL structure consistent and migrate data without loss

Configure technical tags and resubmit the site to search engines for indexing

Multilingual:

Subdirectory structure (/zh/, /en/) with hreflang configured

User-friendly language switcher; avoid automatic redirects
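With a subdirectory layout, the hreflang annotations for one page can be generated from a language map. Every language version should list all alternates, including itself. An `x-default` entry names the fallback version.

In this sketch, the `langs` mapping and the choice of default language are illustrative assumptions.

```python
def hreflang_links(base_url: str, path: str, langs: dict, default_lang: str) -> list:
    """Build <link rel="alternate"> tags; langs maps hreflang code -> subdirectory."""
    base = base_url.rstrip("/")
    page = path.lstrip("/")
    links = [
        f'<link rel="alternate" hreflang="{code}" href="{base}/{subdir}/{page}" />'
        for code, subdir in langs.items()
    ]
    # x-default tells search engines which version to show when no language matches.
    links.append(
        f'<link rel="alternate" hreflang="x-default" href="{base}/{langs[default_lang]}/{page}" />'
    )
    return links


for tag in hreflang_links("https://example.com/", "/services", {"zh": "zh", "en": "en"}, "en"):
    print(tag)
```

The same set of tags goes into the `<head>` of both /zh/services and /en/services.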

Based on the analysis results, develop specific, actionable optimization strategies and implementation plans.

SEO report design specialist

You are a professional SEO report designer, skilled at turning analytical data into polished, visual HTML reports.

Design principles:

Data visualization: charts, progress bars, and scorecards showing key metrics

Clear hierarchy: problem severity markers (red for serious, yellow for warning, green for normal)

Interaction-friendly: collapsible sections, tabs, responsive layout

Report structure:

Executive summary: overall rating, key issues, priority recommendations

Technical performance: load speed, server metrics, performance score

Basic SEO: TDK analysis, structural issues, tag checks

Content optimization: analysis of images, links, and text quality

Traffic insights: source analysis, keyword opportunities, competitive dynamics

Action plan: priority list, timeline, expected results

Visual elements:

Use a modern CSS framework (Bootstrap/Tailwind)

Icon library

Color scheme (green for success, yellow for warning, red for danger)

Data charts (Chart.js/D3.js)

Technical requirements:

Responsive design, mobile friendly

Printable version, PDF export compatible

Clear typographic hierarchy and spacing

Professional branding scheme

Generate a complete HTML report based on the SEO analysis, including CSS styles and JavaScript interactivity.
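The model generates the full report. A small deterministic renderer is still useful for checking the color convention or producing a fallback summary. The sketch below renders findings as a list with the red/yellow/green severity colors.

The hex values are the Bootstrap-style danger/warning/success colors and are our own choice. Messages are escaped with `html.escape`, because findings such as "Missing <title>" contain markup characters.

```python
import html

# Severity -> color, following the red/yellow/green convention above.
SEVERITY_COLORS = {"serious": "#dc3545", "warning": "#ffc107", "normal": "#28a745"}


def render_scorecard(findings: list) -> str:
    """Render (severity, message) pairs as a color-coded HTML list."""
    rows = []
    for severity, message in findings:
        color = SEVERITY_COLORS.get(severity, "#6c757d")  # grey for unknown severities
        rows.append(
            f'<li style="border-left:4px solid {color};padding-left:8px">'
            f"<strong>{html.escape(severity)}</strong>: {html.escape(message)}</li>"
        )
    return '<ul class="seo-findings">' + "".join(rows) + "</ul>"
```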

In the workflow of the whole SEO agent, Gemini 2.5 Pro's central role is "understanding and decision-making". It does not collect data. Its job is to turn multi-source data into actionable insights in three steps: first an objective diagnosis, then an optimization strategy, then a visual report. Throughout, it keeps the sub-agents' outputs consistent.

Gemini's long context window and multilingual capabilities let the SEO process be heavily compressed and automated. This especially helps go-global companies quickly build authority on Google and close the traffic loop.

* Some Google AI technologies are available only in overseas scenarios.