For a website to appear near the top of the major search engines, it needs to be optimized so that it ranks well for different keywords and thereby attracts visitors. This article uses the optimization work on the tuco website as an example, in the hope that it will be a useful reference.

Background

Our main business is selling copyright licenses for creative material, including pictures, videos, music and fonts, primarily to large B2B clients. To improve sales, the website's products need to be optimized in several ways. The overall direction is to "broaden sources and cut waste": increase the number of users reaching the site while raising the conversion rate at the key steps of the key paths, and so improve the overall metrics.

Analysis of several years of historical data shows that 60 per cent of users access the site from PCs running Windows, 18 per cent from Android and 13 per cent from macOS. By source channel, 43 per cent of users across 2022 came from SEO (organic search traffic), 29 per cent from direct visits to the domain and 24 per cent from SEM.

In summary, to broaden sources we focus on SEO and SEM (direct visits come mostly from existing clients, who type the domain name into the browser and go straight to the site). SEM advertising costs are high every year, and the work there is mainly about optimizing the ad delivery strategy, which is covered in other articles and not discussed here. SEO is therefore the traffic channel discussed in this article.

This article describes how, over about a year, we used SEO to improve the site's ranking for key search terms and so gained more traffic and more business opportunities. SEO is a free way to acquire traffic, and readers who need it may find this a useful reference.

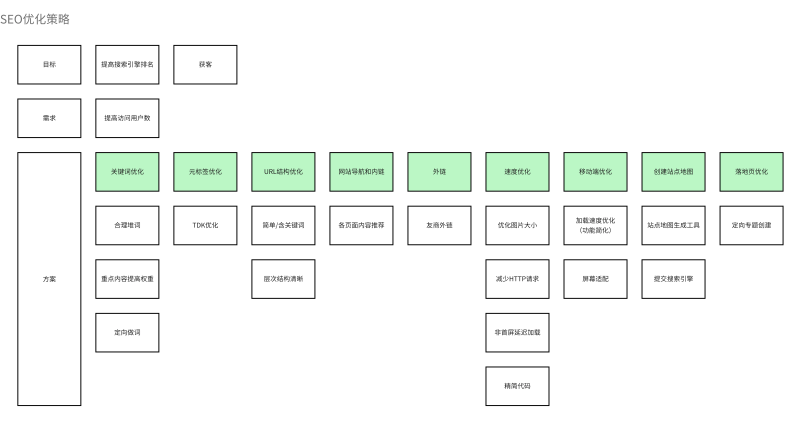

Objectives:

The goal of SEO is to improve the website's ranking for different keywords in the main search engines and thereby attract visitors.

I. SEO rationale

In essence, SEO optimizes the structure and user experience of a website so that search engine crawlers can crawl and index its pages more easily, and so that the site earns a good rating through the search engine's link-voting mechanism and its user-experience checks. The site then gets a better position when users search for certain keywords, which increases exposure and achieves the goal.

How a search engine works is actually simple. The major search engine companies send many crawlers, regularly or from time to time, to visit web pages across the internet. Pages that meet their standards and have value are indexed (in general, everything except pages that are obviously cheating or illegal), and each indexed page is given a rating. When a user searches, the engine recalls the pages relevant to the query keywords and returns them. Leaving SEM advertisements aside, the order of the results depends primarily on how relevant each recalled page is to the query and on each page's weight.

II. Keyword selection

Nothing in SEO matters more than keyword optimization. The search engine rates a page by the frequency, position and importance of the keywords on it, and uses that rating to match the page to what users search for. Helping a page rank well for its corresponding keywords is therefore a very important SEO job.

First, make sure the keywords match the page content, with no mismatch between the two.

Second, keywords carry different weight depending on where they sit on the page. For example, reading from top to bottom, content nearer the top has higher weight, and content in an h1 (heading) tag has higher weight than content in a p (paragraph) tag.

Core keywords should appear on the page a reasonable number of times, and "reasonable" is the important word. Search engines have developed anti-cheating algorithms over the years; when keywords are found stuffed onto a page maliciously, the engine may lower the page's rating, refuse to index it, or even ban the entire domain.
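As a rough illustration of what "a reasonable number of times" could mean in practice, the sketch below measures how large a share of a page's words a keyword takes up. The function names and the 8 per cent threshold are assumptions made for this example; search engines do not publish such a limit.

```python
import re


def keyword_density(text: str, keyword: str) -> float:
    """Return the share of words in `text` that equal `keyword` (case-insensitive)."""
    words = re.findall(r"\w+", text.lower())
    if not words:
        return 0.0
    return words.count(keyword.lower()) / len(words)


def looks_stuffed(text: str, keyword: str, max_density: float = 0.08) -> bool:
    """Flag a page whose keyword density exceeds an illustrative threshold.

    `max_density` is an assumption for this sketch, not a search engine rule.
    """
    return keyword_density(text, keyword) > max_density
```

A check like this could run before a page is published, so that automatically generated titles and keyword lists do not drift into stuffing.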

Take our stock library site as an example. We want the photo pages to be indexed, and we want them to rank well when someone searches Baidu for "pictures". So the page title, originally "a woman", is changed by code logic into "a woman's picture". The keywords listed below it can be expanded in the same way, so that terms such as "humans" and "women" also gain the word "picture". This ensures that the keywords, title and page content match one another, and it also increases how often the picture theme appears on the page, so that the search engine judges the page to be mainly about pictures. When users search for "pictures", our page then has a better chance of carrying a good weight.

III. Meta tag optimization

Meta tag optimization is also known as TDK optimization. Readers who have done front-end development will be familiar with it; otherwise, open a browser and press F12 or open the developer tools. What you see is the HTML code from which the page is rendered. Part of that HTML describes the page itself: just as an article has a title and an abstract, a page has three key descriptive fields, namely title, description and keywords.

The TDK must describe the main content of the current page concisely and precisely. When indexing a page, the search engine gives very high weight to what appears in the TDK, much as a reader starts with the title of an article, which usually captures its core.

For example, on one of our photo pages the title might be "a picture of a beautiful model", the description might be "xxxx, original photo purchase", and the keywords might be "photos, material, original picture, model, beauty".
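To make the three TDK fields concrete, here is a minimal sketch of how a page template might render them into the HTML head. The function name `render_tdk` is hypothetical, and the values are the sample strings from the example above; the site's real template code is not shown in this article.

```python
from html import escape


def render_tdk(title: str, description: str, keywords: list[str]) -> str:
    """Return the <title> and the two meta tags that make up a page's TDK."""
    joined_keywords = ", ".join(keywords)
    return "\n".join([
        f"<title>{escape(title)}</title>",
        f'<meta name="description" content="{escape(description)}">',
        f'<meta name="keywords" content="{escape(joined_keywords)}">',
    ])


head = render_tdk(
    "a picture of a beautiful model",
    "xxxx, original photo purchase",
    ["photos", "material", "original picture", "model", "beauty"],
)
print(head)
```

Escaping the values matters because titles and descriptions often come from user-uploaded metadata and may contain quotes or angle brackets.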

IV. URL structure

The page address, or URL, is also an important signal for the search engine. URLs should be as clear and short as possible and, where possible, include the page's keywords.

For example, our home page is at www.xxx.com, the photo search page (when searching for the keyword "city") is at www.xxx.com/image-search/chenshi, and a photo detail page is at www.xxx.com/image/123513. The hierarchy is clear and readable, and each URL contains a keyword or the photo ID.
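The URL patterns above can be produced by small helper functions, sketched below. The helper names and the `https://` scheme are assumptions for illustration; `www.xxx.com` is the placeholder domain used in the examples.

```python
from urllib.parse import quote

BASE = "https://www.xxx.com"  # placeholder domain; the scheme is an assumption


def search_url(keyword_slug: str) -> str:
    """Build a readable search URL such as /image-search/chenshi."""
    slug = keyword_slug.strip().lower()
    return f"{BASE}/image-search/{quote(slug)}"


def image_url(photo_id: int) -> str:
    """Build a detail-page URL that carries the photo ID."""
    return f"{BASE}/image/{photo_id}"


print(search_url("chenshi"))
print(image_url(123513))
```

Keeping URL construction in one place makes it easier to hold the hierarchy consistent across search pages and detail pages.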

V. Site navigation and internal links

The search engine has an important mechanism for scoring pages, called link voting. Suppose the search engine rates page A very highly and page A links to page B. The engine reasons: "Page A is important and it links to page B, so page B must be important too." If pages A, C and D all link to page B, the engine gives page B an even higher rating.
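The voting idea can be illustrated with a simplified PageRank-style iteration. This is only a sketch of the general technique: the damping factor, the number of rounds and the function name `link_votes` are assumptions, and real search engines combine many more signals that they do not disclose.

```python
def link_votes(links: dict[str, list[str]], damping: float = 0.85,
               rounds: int = 50) -> dict[str, float]:
    """Score pages by repeatedly passing each page's score along its outgoing links."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    score = {page: 1.0 / n for page in pages}
    for _ in range(rounds):
        nxt = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its vote evenly among the pages it links to.
                share = damping * score[page] / len(targets)
                for target in targets:
                    nxt[target] += share
            else:
                # A page with no outgoing links spreads its vote over all pages.
                for target in pages:
                    nxt[target] += damping * score[page] / n
        score = nxt
    return score


# Pages A, C and D all link to page B, as in the example above.
scores = link_votes({"A": ["B"], "C": ["B"], "D": ["B"], "B": []})
best = max(scores, key=scores.get)
```

In this small graph page B ends up with the highest score, because it collects votes from all three other pages.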

That is why many websites link generously between their articles, pages and sections.

Navigation, in turn, is there for the crawlers. A crawler traverses pages in one of two ways: depth-first or breadth-first. Depth-first means following a link on a page to the next page, then a link on that page to the one below it, and so on down to a certain depth before returning to the starting page. Breadth-first means visiting all the links on a page first and then moving down a level. Both approaches share one requirement: pages must be linked to each other so that the spider has a path to crawl. Site navigation is the best way to provide those links.
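The two traversal orders can be sketched over a small in-memory link graph. The page names and the `max_depth` default are made up for illustration, and a real crawler would fetch pages over HTTP rather than read a dictionary.

```python
from collections import deque


def crawl_breadth_first(links: dict[str, list[str]], start: str) -> list[str]:
    """Visit every link on a page before moving one level deeper."""
    seen, order, queue = {start}, [], deque([start])
    while queue:
        page = queue.popleft()
        order.append(page)
        for nxt in links.get(page, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return order


def crawl_depth_first(links: dict[str, list[str]], start: str,
                      max_depth: int = 3) -> list[str]:
    """Follow one link chain down to `max_depth` before backing up."""
    seen, order = set(), []

    def visit(page: str, depth: int) -> None:
        if page in seen or depth > max_depth:
            return
        seen.add(page)
        order.append(page)
        for nxt in links.get(page, []):
            visit(nxt, depth + 1)

    visit(start, 0)
    return order


site = {
    "home": ["pictures", "videos"],
    "pictures": ["photo-1"],
    "videos": ["video-1"],
    "orphan": [],  # no page links here, so neither crawl can reach it
}
bfs_order = crawl_breadth_first(site, "home")
dfs_order = crawl_depth_first(site, "home")
```

Neither order reaches the `orphan` page, which is the point made above: a page without an incoming link gives the spider no path to it.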

Navigation therefore needs to be very clear. Our picture home page, video home page, music home page and so on are all linked from a clear navigation bar at the top of the site.

And since the home page carries the highest weight on any website, pages that we want crawled and indexed should be linked from the home page whenever possible.

We do this on our home page, which carries many recommended topics, links to selected pictures, the images users download most, and the latest uploads to the library. These pages receive a vote from the home page, and they also link to one another, which raises their score and weight with the search engine.

VI. Link exchange

The voting logic described above applies not only to internal links; external links between sites are also an important rating signal. For example, if site A has a high search engine weight and links to site B from its home page, site B is considered a high-quality site too (it scores higher on this dimension). As a result, webmasters often form alliances to exchange valuable links and vote for each other to boost their SEO.

We did not pursue this in our actual SEO work. As a leading product in our industry we are careful about which external links we carry, so we could not exchange friendly links purely for the sake of SEO.

VII. Speed optimization

After the search engine returns a site in its results, it also has logic to monitor the click-through rate and exit rate of each site. Take Baidu's free analytics service, which is equivalent to page-level tracking statistics: the webmaster only needs to add Baidu's code to the HTML head to view user visit data in the analytics console. In exchange for that free service, Baidu learns the exit rate of every page.

The search engine monitors the click-through rate of a page, that is, the search-to-click conversion rate: of the users who see the result, how many click it. A higher click-through rate suggests that the page is more relevant to the search term, and the page gains a better ranking.

The exit rate is an important basis for the search engine's judgment of a page's user experience. An exit means that the user closes the page directly without visiting another page on the same domain. A high exit rate indicates, to some extent, either that the page does not match what was searched for or that the page experience is particularly poor. When the exit rate is high, the search engine lowers the page's weight for the corresponding keyword, resulting in a lower ranking.
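The two rates discussed here are simple ratios. The sketch below spells them out; the function and parameter names are illustrative, and the sample numbers are invented purely to show the arithmetic, not taken from our data.

```python
def click_through_rate(impressions: int, clicks: int) -> float:
    """Share of search-result impressions that led to a click."""
    return clicks / impressions if impressions else 0.0


def exit_rate(visits: int, exits: int) -> float:
    """Share of visits that ended on this page with no further page view on the domain."""
    return exits / visits if visits else 0.0


# Invented numbers, only to show the calculation.
ctr = click_through_rate(impressions=1000, clicks=50)
page_exit_rate = exit_rate(visits=200, exits=150)
```

Tracking both per keyword and per landing page helps separate a relevance problem (low click-through) from an experience problem (high exit rate).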

In addition, when search engine crawlers crawl a page, they also assess how fast it loads (the actual rendering). Crawlers do not load page resources the way a browser does, so they cannot measure load speed directly. However, search engines have several indirect ways to assess it: measuring how long the HTML takes to download, estimating load time from the size of the page resources (JS, HTML, CSS, images), and using internal tools that test page load speed.

In short, page speed affects the search engine's work in two ways.

First, a crawler tends to spend a fixed amount of time on each site, say two hours, and indexes whatever it can evaluate in that time. The faster the pages load, the more pages it gets through.

Second, speed feeds into the assessment of page experience; a poor experience may reduce the site's weight and the likelihood of being indexed.

In this regard, our product focus begins with the following:

VIII. Mobile optimization

As mentioned earlier, about 20 per cent of the traffic comes from mobile devices. The search engine rates desktop and mobile weights separately, and mobile has its own set of rating criteria.

Because of network and device limitations, the mobile criteria for page size and loading speed are stricter. Mobile optimization therefore mainly means building pages specifically for mobile: simplifying page functionality and fitting the screen better to meet mobile interaction needs.

IX. Creating sitemaps

Crawlers will come to the site to crawl and index it, but simply waiting for them is not a good approach. A very efficient alternative is to list all the primary and secondary pages of the site in a sitemap and submit it to the search engines.

First, sort out the structure of the website. Our product, for example, has a home page, secondary home pages, search pages, detail pages and topic pages, which are the main content pages. Then generate a sitemap in XML format with a sitemap tool, and submit it to the webmaster platforms of search engines such as Baidu and Google.
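As a minimal sketch of the generation step, the code below builds a sitemap in the standard sitemaps.org XML format with Python's standard library. The function name `build_sitemap` and the `https://` scheme are assumptions, and the URLs are the placeholder addresses used earlier; a production sitemap for a large site would also need to be split into multiple files.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[str]) -> str:
    """Return a sitemap XML document with one <url><loc> entry per address."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


sitemap_xml = build_sitemap([
    "https://www.xxx.com/image-search/chenshi",
    "https://www.xxx.com/image/123513",
])
```

The resulting string can be written to a file such as `sitemap.xml` and regenerated whenever pages are added or removed.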

Sitemaps need to be updated regularly, especially when new pages are added and old pages are deleted. If a large number of pages are suddenly deleted and become 404s without being reported proactively, the search engine may treat this as very serious, lose trust in the site and demote it.

For the deletion of pages, the following measures have been taken:

X. Landing page improvement

After the pages were indexed, two important questions remained:

How to retain visiting users, and how to raise the ranking of specific desired keywords in a targeted way.

Essentially, the landing page (the first page a user visits after arriving) needs to be optimized. It requires high-quality content that meets the user's expectations, and it should also broaden what the user browses through similar pictures and recommended related topics, so that users stay immersed in a continuous stream of pages within the site.

To raise the ranking of particular keywords in a targeted way, in addition to the single-page keyword placement described above, we group pictures on the same subject into a topic. The topic page carries the subject in its title and displays the pictures that share it, forming an aggregation page. Aggregation pages help a great deal, both in adding weight to the keyword and in keeping users browsing.

Creating topics by hand had long been an efficiency bottleneck, so we built a system in which a topic subject can be set directly and high-quality content is then collected automatically into an aggregation page. It creates hundreds of topic pages a day.
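The grouping idea behind an aggregation page can be sketched as follows. This is not our production system: the record fields `id` and `subjects`, the `min_size` cut-off and the function name are all assumptions made for the example.

```python
from collections import defaultdict


def build_topic_pages(pictures: list[dict], min_size: int = 2) -> dict[str, list[int]]:
    """Group picture IDs by subject and keep subjects with enough pictures for a topic page."""
    by_subject: dict[str, list[int]] = defaultdict(list)
    for picture in pictures:
        for subject in picture["subjects"]:
            by_subject[subject].append(picture["id"])
    return {subject: ids for subject, ids in by_subject.items() if len(ids) >= min_size}


# Hypothetical picture records, for illustration only.
library = [
    {"id": 1, "subjects": ["city", "night"]},
    {"id": 2, "subjects": ["city"]},
    {"id": 3, "subjects": ["forest"]},
]
topics = build_topic_pages(library)
```

Each key in the result would become the title keyword of one topic page, and the ID list would decide which pictures that page displays.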

XI. Optimization results

It has been almost a year since we started the SEO work. In search engine weight we have moved from the bottom of our industry (about 100 per cent) to essentially the top of it (seven per cent, one per cent). Indexed pages stand at twenty-five million, with 25,000 ranked keywords on PC and 24,000 on mobile, of which 230 on PC and 800 on mobile rank in the top three.

The number of users arriving through the SEO channel in January-November 2023 was 124 per cent higher than in January-November 2022; overall traffic rose 56 per cent year on year, and the number of commercial leads rose 42 per cent. This far exceeded the expected goal. We do not plan further major pushes, because we later found that SEO gains are clear in the early phase but that continued expansion brings some problems, which are described later.

XII. Other issues

So how did the SEO work go? The results were delivered. But this is really a systemic problem: growth in SEO traffic is not our core objective; increasing sales is. In the early period, as SEO traffic grew quickly, consultations and business leads rose significantly with it (the definitions of leads, consultations, MQLs and SQLs, and the conversion funnel, can be covered another time). As traffic continued to grow later on, however, the increase in consultations and leads relative to the growth in SEO traffic began to fall off markedly.

One characteristic of SEO traffic is that it is relatively uncontrollable (keywords cannot be targeted precisely), so a lot of ineffective traffic reaches the site and puts pressure on customer-service resources. How to raise effective traffic, filter out ineffective traffic, and improve conversion efficiency along the subsequent conversion path, and so contribute to monetization, could be shared later as separate topics.

Summary

One year of SEO work and its results can be summarized as follows:

Summary overview chart

Finally, now that the entire project is over, I am going to take a look back at what we did.