Preface: For any business that relies on web traffic, unstable site rankings are among the most worrying problems. A core keyword that was on the first page yesterday has vanished today; monthly traffic curves swing like a roller coaster. This volatility is not accidental. It is the outward expression of a deep restructuring of the underlying algorithmic logic of search engine optimization.

In 2026, search engine optimization is no longer a simple keyword ranking game.

From Google to Baidu, from traditional search to AI-generated answer engines, the underlying algorithmic logic of search engine optimization is moving from “link-based authority assessment” to “trust validation based on entity understanding”.

The 2026 search engine algorithm

Three underlying logical changes

From "keyword matching" to "advanced entity understanding"

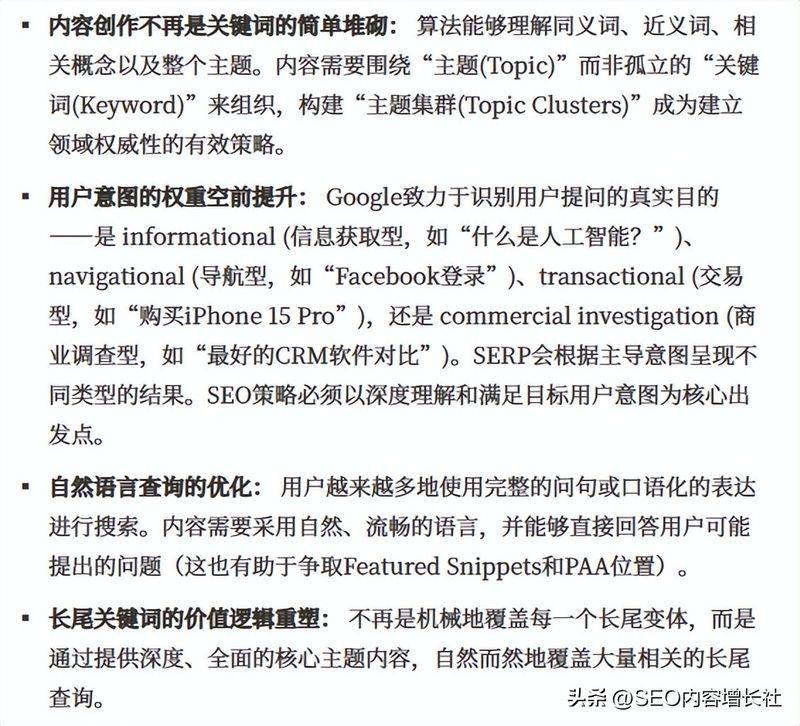

The underlying algorithmic logic of conventional search engine optimization relied on quantitative indicators such as keyword density and the number of backlinks. However, as natural language processing and deep learning have matured, search engines can now “understand” content much as humans do.

Google's core updates between 2024 and 2026 (including the core updates of August 2024, March 2025 and December 2025, and the Discover core update of February 2026) clearly point in one direction: the E-E-A-T principles (experience, expertise, authoritativeness and trustworthiness) have become the core basis of ranking. This means that the search engine is no longer satisfied with finding keywords on the page. Instead, it assesses whether the content really solves the user's problem, whether it was produced by creators with a professional background, and whether it is endorsed by authoritative sources in the field.

Core changes in Google's search engine algorithms in 2026: from content quality to an in-depth analysis of Discover's new ecosystem

For business websites, this means that the era of relying on keywords alone has ended.

The search engine analyzes whether your content forms a complete knowledge system and whether it is semantically linked to other authoritative sources.
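One common way to make entities and their links to other sources explicit is schema.org structured data embedded as JSON-LD. The sketch below builds such markup in Python. The headline, author, organization and `example.com` URLs are hypothetical placeholders, and nothing here implies that any particular engine weighs these fields in a specific way.

```python
import json

# Hypothetical article metadata; every name and URL below is a placeholder.
article_markup = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "Why site rankings fluctuate after a core update",
    "author": {
        "@type": "Person",
        "name": "Jane Doe",
        "jobTitle": "Search Analyst",
        # sameAs points to other profiles that describe the same person.
        "sameAs": ["https://example.com/authors/jane-doe"],
    },
    "publisher": {
        "@type": "Organization",
        "name": "Example Co",
        "url": "https://example.com",
    },
    # about names the topic entity the article covers.
    "about": [{"@type": "Thing", "name": "Search engine optimization"}],
    # citation lists the sources the article relies on.
    "citation": ["https://example.com/source-report"],
}

# Serialize to JSON-LD and wrap it in the script tag used to embed it in HTML.
json_ld = json.dumps(article_markup, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

The resulting tag would be placed in the page's HTML, so the author, publisher and cited sources are stated as data rather than left for a parser to infer from prose.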

From website optimization to being cited in AI-generated content

Gartner predicted that by 2026 traditional search engine traffic would shrink by 25 percent, with new entry points such as AI chatbots taking a large share of the market. That projection is becoming a reality. The underlying algorithmic logic of search engine optimization is undergoing a paradigm shift from “optimizing for clicks” to “optimizing for citations”.

Gartner identifies the top strategic technology trends for 2026

When a user asks a question in ChatGPT, DeepSeek, Doubao or Baidu AI, the AI model does not return ten blue links. It generates a single integrated answer directly.

The AI model uses RAG (retrieval-augmented generation) to select the most authoritative sources of information from a mass of content.

This means that even if your website ranks first in traditional search results, you may be completely absent from AI-generated answers if your content cannot pass the AI's cross-verification logic.

On the other hand, if your content is frequently cited by multiple AI platforms, you gain the advantage of being the "first touchpoint" where users get their information.
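The retrieval step of RAG can be illustrated with a toy example: score each candidate passage against the question and pass only the best matches to the model. This is a minimal sketch under simplifying assumptions. It uses bag-of-words cosine similarity, whereas production systems typically rely on dense embeddings and further trust signals. The passage ids and texts are made up for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, passages: dict[str, str], top_k: int = 2) -> list[tuple[str, float]]:
    """Return the top_k (passage id, score) pairs most similar to the query, best first."""
    q = Counter(tokenize(query))
    scored = [(pid, cosine_similarity(q, Counter(tokenize(text)))) for pid, text in passages.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Hypothetical passages standing in for indexed web content.
passages = {
    "guide": "how to fix unstable site ranking after a core update",
    "recipe": "a simple recipe for tomato soup",
    "news": "company picnic photos from last summer",
}
results = retrieve("why is my site ranking unstable", passages)
print(results)
```

Only the passages returned by `retrieve` would reach the generation step, which is why content that is never retrieved cannot be cited, whatever its classic ranking.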

From single-page rankings to brand-wide visibility

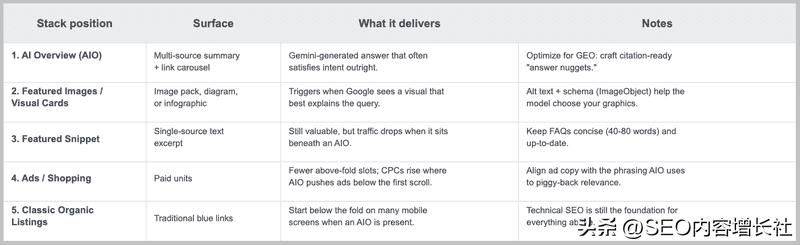

Analysis from Ranktracker indicates that the 2026 search results page is no longer a simple list of website rankings. It is a composite structure made up of AI Overviews, featured snippets, knowledge panels, forum summaries and more.

The 2026 search results page

A web page may rank seventh in the traditional organic results yet still appear in the AI Overview, where users see it directly.

The underlying algorithmic logic of search engine optimization has therefore shifted from ranking individual pages for individual keywords to assessing a brand's overall visibility on the search results page.
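To make the idea of overall visibility concrete, the sketch below combines a page's organic position with the result-page features it appears in. The feature names and weights are hypothetical and chosen only for illustration. No search engine publishes such values, so treat this as a way of thinking about measurement rather than a real scoring formula.

```python
from typing import Iterable, Optional

# Hypothetical weights, for illustration only; no search engine publishes such values.
FEATURE_WEIGHTS = {
    "ai_overview": 3.0,
    "featured_snippet": 2.0,
    "knowledge_panel": 2.0,
    "forum_summary": 1.0,
}


def organic_weight(position: Optional[int]) -> float:
    """Reciprocal-rank weight for an organic position (1 = top, None = not ranked)."""
    return 1.0 / position if position else 0.0


def visibility_score(position: Optional[int], features: Iterable[str]) -> float:
    """Add the organic weight to the weight of every result-page feature the brand appears in."""
    return organic_weight(position) + sum(FEATURE_WEIGHTS.get(f, 0.0) for f in features)


# A page ranked seventh but shown in the AI overview, versus a page ranked first with no features.
seventh_with_overview = visibility_score(7, ["ai_overview"])
first_without_features = visibility_score(1, [])
print(seventh_with_overview > first_without_features)
```

Under these assumed weights, the seventh-ranked page that appears in the AI overview scores higher than the first-ranked page with no feature placement, which mirrors the scenario described above.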

Search results page

Technical factors behind unstable website rankings

On-site keyword and content dilution lowers ranking weight

As their websites grow, many businesses find themselves in a situation where “the more content there is, the less stable the rankings”.

When multiple pages are optimized for the same search intent, the search engine struggles to decide which page most deserves to be shown, and rankings shift back and forth as a result.
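A simple audit can surface this kind of overlap. The sketch below assumes you keep a mapping from each URL to the query it targets (the URLs and queries here are hypothetical) and flags queries targeted by more than one page. Normalizing by word set is a crude stand-in for real intent matching.

```python
from collections import defaultdict


def find_cannibalization(page_targets: dict[str, str]) -> dict[str, list[str]]:
    """Group page URLs by normalized target query and keep queries with two or more pages."""
    groups: dict[str, list[str]] = defaultdict(list)
    for url, query in page_targets.items():
        # Normalize case and word order so trivially reworded queries collide.
        key = " ".join(sorted(query.lower().split()))
        groups[key].append(url)
    return {key: sorted(urls) for key, urls in groups.items() if len(urls) > 1}


# Hypothetical site inventory: URL -> query the page was optimized for.
page_targets = {
    "/blog/seo-audit-checklist": "SEO audit checklist",
    "/services/seo-audit": "seo audit checklist",
    "/blog/link-building": "link building guide",
}
conflicts = find_cannibalization(page_targets)
print(conflicts)
```

Each flagged group is a candidate for consolidation, for example by merging the pages or by giving each one a distinct intent.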

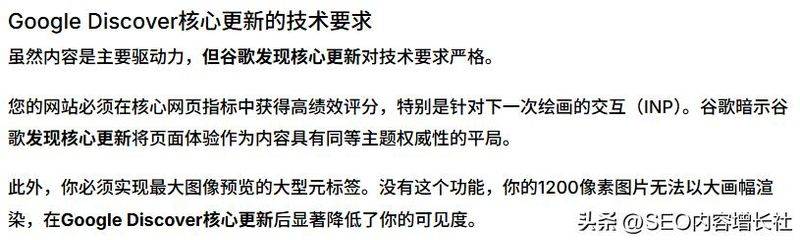

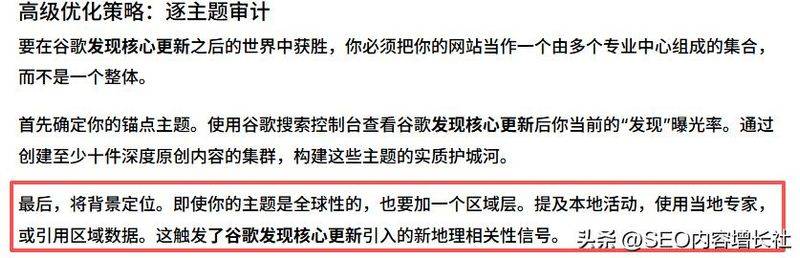

Google stated in the February 2026 Discover core update that it would prioritize websites with “in-depth expertise” in specific areas. This means that broad, generalist content will be demoted, while websites that build content clusters around core topics will earn stable rankings.

Google's first Discover core update: a new strategy for viral traffic

Traffic entry-point challenge: the trust problem of AI-generated content

In 2024, it was made clear that content with a high proportion of AI-generated text, and articles written purely by AI, would be a main target of enforcement.

As AI generation tools become widely available, the challenge for search engines is to distinguish “valuable AI-assisted content” from “worthless mass-produced junk content”.

The China Internet Network Information Center (CNNIC) released the 57th Statistical Report on China's Internet Development (hereinafter "the Report") in Beijing. According to the Report, as of December 2025 China had 1.125 billion internet users, with an internet penetration rate above 80 percent, and the benefits of digital development were reaching a wider population. The digital economy grew steadily, with the value added of its core industries rising to 10.5 percent of GDP and the digital transformation of industry accelerating. Generative AI users reached 602 million, with applications penetrating deep into daily life and production.

The web traffic market is being reshaped by AI.

When hundreds of millions of users are accustomed to getting answers through AI assistants (e.g. Mankind assistant, Doubao, DeepSeek, etc.), conventional web search SEO needs to be extended to "AI search engine optimization". Whether your content can be accurately retrieved and recommended by these AI applications will be a key battleground in the next phase of competing for the attention of 600 million users.

On the other hand, corporate content that depends entirely on bulk output from AI tools, with no human editing or fact-checking, will be judged as low-quality pages, lowering the overall weight of the website.

Paradigm shift: the deep transformation of Google SEO's underlying logic and the disruptive arrival of AI

Stronger localization and personalization signals

The Google Discover update introduced a local-content priority mechanism that gives precedence to content from the user's own country. For businesses serving a particular region, this means that ranking no longer depends solely on content quality but is also closely tied to geographical relevance.

Google's first Discover core update: a new strategy for viral traffic

Similarly, users' historical behaviour, device type and search context increasingly affect rankings. As a result, different users may see completely different results for the same keyword, and the traditional “absolute ranking” no longer exists.

SEO optimization by Climber Digital Marketing

At the technical level, Climber has developed differentiated keyword layout strategies for mainstream search platforms such as Baidu, Douyin and Xiaohongshu, and has optimized more than 100,000 keywords in total.

By continuously monitoring changes in search trends, it provides clients with full keyword coverage, from core terms to long-tail keywords.

Shanghai Climber Digital Marketing core business

At the content level, Climber emphasizes a creative approach of “human polishing + value output”.

Faced with the policies of Baidu, Bing and the content sources used to train large AI models, all of which act against content with a high proportion of AI-generated text, the team insists on layering industry insight, case data and practical experience on top of AI assistance to ensure that content is original and useful.

This strategy is particularly effective for B2B clients and large-category B2C clients. What their decision makers need is not a broad industry overview but vertical depth that addresses operational problems.

SEO 3.0 optimization by Shanghai Climber Digital Marketing

At the data level, Climber monitors changes in user demand and algorithm fluctuations in real time through tools such as Baidu Index and search keyword analysis. This capacity for dynamic adjustment is the core safeguard against unstable rankings.
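As a rough illustration of this kind of monitoring, the sketch below flags keywords whose daily positions swing widely. It assumes you already export a daily rank history per keyword from whatever tool you use. The sample data and the threshold of 2.0 positions are hypothetical, and the standard deviation is only one possible measure of volatility.

```python
from statistics import pstdev


def volatile_keywords(rank_history: dict[str, list[int]], threshold: float = 2.0) -> list[str]:
    """Return keywords whose daily positions have a population standard deviation above threshold."""
    return sorted(
        keyword
        for keyword, positions in rank_history.items()
        if len(positions) > 1 and pstdev(positions) > threshold
    )


# Hypothetical five-day rank history (1 = top position).
rank_history = {
    "core keyword": [2, 9, 4, 15, 6],
    "long-tail keyword": [3, 3, 4, 3, 3],
}
flagged = volatile_keywords(rank_history)
print(flagged)
```

Keywords that are flagged repeatedly are the ones worth checking first against recent algorithm updates or content changes.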

Climber's services cover SEO optimization, SMO brand communication, GEO (generative engine optimization), private-domain online store development and digital advertising. They are aimed at helping businesses find reliable growth paths amid changes to the underlying algorithms of search engine optimization.

Shanghai Climber Digital Marketing services

Stable website rankings are no longer the result of gaming the algorithms but of the continuous accumulation of real value. When your content truly solves users' problems and your brand becomes a trusted source of information in the eyes of AI, ranking fluctuations are no longer a threat. They become an important source of information for your AI search optimization strategy.

In this sense, understanding the underlying algorithmic logic of search engine optimization means understanding the rules by which voice is distributed in the digital world. Businesses that master the new rules of shifting traffic will ultimately gain a lasting competitive advantage amid change.