“Earn 3,000+ in 10 days, generated purely by software, beginners can do it.” “Polished short stories written with a large model in seven minutes, every one signed.” “Selling virtual materials made with a large model after work, with 750 orders placed automatically in half a month.” Open any social platform and pitches like these are everywhere. Has it ever occurred to you that a significant share of the viral articles and hot videos online are not actually produced by real people? More importantly, a significant share of the “industrial products” that AI churns out in bulk do not match the facts and earn their profits from attention won through fabrication.

Social platforms are full of “ideas” about how to make money

A reporter's investigation lasting more than half a month found that a complete fakery industry chain has formed, covering AI content generation, the sale of tutorial courses, viral traffic growth, and commercial monetization. It not only misleads ordinary internet users but also disrupts the normal order of online communication and the business environment.

One, "two."

Using large AI models to generate text and video for rumour-mongering is no longer unusual in the cases disclosed by local cyberspace and public security authorities. In fact, within the whole fakery industry chain, using AI to create rumours is only the starting point.

Specifically, the industry chain involves several profit models. The most common is for fakers to run matrices of self-media accounts that publish large volumes of AI-generated content to attract attention. They then profit from the platforms' traffic revenue-sharing mechanisms or from ads, and also use the attention to market their own services.

At the same time, a large number of businesses and individuals are marketing “AI academy” tutorials. Well versed in the platforms' traffic and ad revenue-sharing mechanisms, they develop corresponding “AI generation courses” and related services, and profit by selling the courses and the generation services.

Brokerage agencies such as MCNs have also become part of the chain. Some guide their accounts to produce content with AI, while others match accounts to “market demand”. Some of that demand is less than reputable, including smearing certain companies, industries and even public figures.

These profit models each have their own characteristics and are interwoven, with “AI fakery” at their core. In practice, the birth and spread of a single rumour often runs through several chains of interest.

A case in point is the rumour recently refuted by the Ningbo catering and cooking association: “Ningbo takeaways collectively delisted from the platform”.

Tracing it back, the first post about the “Ningbo takeaway delisting” came from a short-video account focused on marketing courses. The topic spread rapidly because the account was not “fighting alone”: more than 20 accounts with similar names published about 40 similar posts in total. Together they formed the source of this round of rumours. Quite a few of those 40-odd posts used text and images generated by AI.

Further verification found that the same company was behind these 20-plus accounts. Its business is providing training services, commonly known as “selling courses”. The company has more than 200 social platform accounts. A look through their content shows that the main mode of operation is for the account's host to sell training courses once news items have attracted traffic.

The false news of the “Ningbo takeaways collectively delisted” spread further because a pile of accounts were “pushing” it. Tracing it back, in the week after the first batch of false posts, AI-generated text and images began to appear on various social platforms, with more than 100 derivative (“secondary creation”) rumours in different forms. A week later, a rumour that “Shanxi takeaways collectively delisted” appeared as well.

How much money can be made by grabbing eyeballs? Through a social platform, the reporter bought a “video AI generation tutorial” billed as “3,000 yuan of income in 10 days”. After the order was placed, the seller sent a web link describing how to remove the watermarks from other people's “chicken soup” videos and how to generate derivative images with AI, and claiming that hundreds of items could be produced every day with the accompanying tools. As for the “3,000 yuan in 10 days”, it relies on the platform's embedded advertising system, live-stream selling and micro-shop sales, with advertising as the main source.

Screenshot of part of the “AI academy” tutorial purchased by the reporter

An insider also worked through the detailed numbers for the reporter: rather than relying on attention to sell their own products or services, these accounts can earn substantial sums from social platforms' traffic revenue sharing and embedded advertising alone.

Take one social platform's embedded-ad revenue-sharing system as an example. For a video account with 1,000 followers and 1,000 views per post, if it allows ad placement, earnings range from 10 to 1,000 yuan depending on the number and position of the ads. “Don't underestimate a few yuan, because a business that profits from grabbing eyeballs has hundreds of accounts under its banner. Moreover, by using AI to write articles on the same topic with different angles and details, each one can be recognized as ‘original’ by the platform, which brings relatively high advertising revenue. Added up, a single rumour is likely to generate thousands of yuan in revenue,” the insider said.

There is “teaching”, and there is “topic selection”

Some people make money with AI, others teach people to make money with AI, and still others post “demand” that covertly props up AI fakery.

On a number of social and paid-knowledge platforms, courses such as “AI viral writing secrets” and “mastering AI from zero” are sold at prices ranging from a few dozen to several thousand yuan. Some accounts even claim to offer AI courses free of charge.

The reporter contacted an account on a social platform that claimed to give away an “AI side hustle” course. The account added the reporter to an “AI writing group” and promoted an AI writing site developed by its company. The site charges by word count; at the current discounted price, 9.9 yuan buys 100,000 generated words.

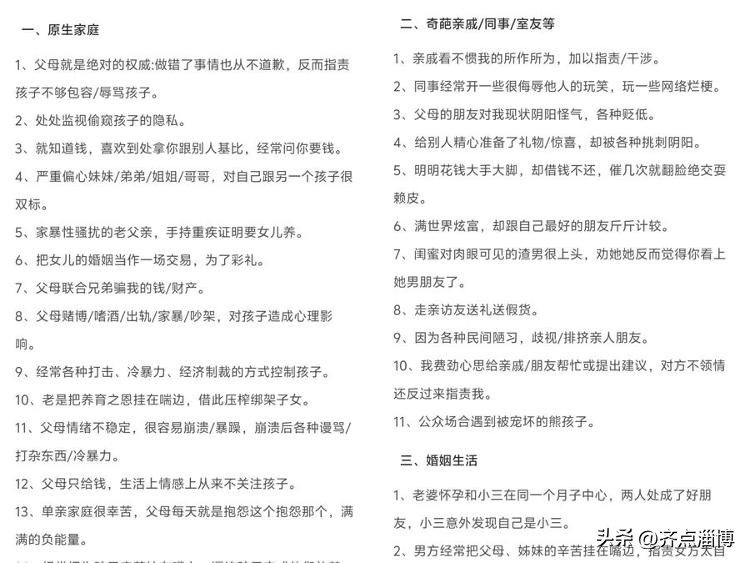

What does the AI-generated text look like? The customer service agent said the reporter would have to enter his own prompts to see the finished product. The agent did, however, provide reference topics for “viral material”, including “family crematorium”, “flaws of one's family of origin” and “outrageous relatives/colleagues/roommates”. The agent also said that, besides earning a traffic revenue share from posting AI-generated articles or videos on social platforms, one could earn more substantial fees by submitting them to certain online literature platforms.

“AI viral article” topics provided by a course the reporter purchased (web screenshot)

The reporter also contacted another “veteran” who markets AI side hustles. He said bluntly: “Topic selection is the key to making money with AI.” To prove his “experience”, he introduced the reporter to several core “topic directions”.

“Selling misery” was his first suggestion. He said that using AI to peddle sad stories is simple, and that the common delivery-rider theme is a typical example: “Use AI to generate scenes such as a rider eating in bad weather, being misunderstood by a customer, or staying on the job despite family difficulties, and pair them with emotional copy. That easily triggers sympathy and sharing.” He also suggested that such topics could take a “mixed” form, because “with a little street delivery footage mixed in, the platform will not easily judge it to be AI-generated”.

He added that if a topic feels overused, one can go the opposite way: “If others do it one way, you do the reverse. You can use AI to generate things like ‘rider buys a luxury car on 30,000 a month’ or ‘buys a house outright in three years’, and heat up the topic by provoking resentment.”

“There is also a market for ‘chicken soup for the soul’ commentary based on trending events.” The “teacher” said that such pieces can take a real event as their subject and then use AI to produce “interpretation, analysis and extension of the real event” in different styles such as “healing”, “inspirational” and “critical”. He also suggested: “Once the AI has generated the text, read it out yourself so that it looks like a real person. It works better.”

The “teacher” said that if the reporter bought the paid service, he could also teach how to remove traces of AI generation and how to spot sensitive wording so as to evade platform review. Going a step further, he could “introduce commercial promotion and self-media orders, to make more money”.

Thus, around AI fakery for profit, a complete grey chain has formed: “course sales, tool referral, content generation, commercial monetization”.

"to defeat magic with magic."

Does AI-generated false content spread through cyberspace in a regulatory vacuum? The answer is clearly no.

On 1 September last year, the Measures for Labeling AI-Generated Synthetic Content officially took effect. The measures make clear that all AI-generated synthetic text, images, video and similar content must be labeled in a visible manner so that users can clearly identify the source of the content.

The reporter verified that mainstream large models have, for the most part, implemented the rule at the point of generation. When text is generated, an “AI-generated” note is automatically appended at the end; when an image or video is generated, a semi-transparent “AI-generated” watermark is added to a corner. When such content is uploaded to several social platforms, the publishing interface shows a pop-up alert: “AI-generated elements have been detected in this content; it will be published with the required label displayed”, and the compliance label is enforced.

At present, a number of social platforms forcibly label posts that show obvious traces of AI generation

However, the fakers are also compiling “de-labeling” techniques. For text, they simply delete the AI note at the end. For images, they erase the watermark by cropping, stretching or light retouching. For video, they use editing tools to cut out the labeled clips. Going further, they strip the AI tags from the file attributes by changing the format or re-encoding. After this processing, some AI-generated content can be posted on social platforms as “original”, and ordinary users find it hard to tell the difference.

Faced with such circumvention of regulation, how should governance proceed? The answer given by Yu Chong, an AI industry practitioner, is to make further use of “defeating magic with magic”, i.e. fighting AI with AI technology. He also presented a real case:

Recently, a piece of false news featuring a delivery rider circulated on the overseas internet. It included a text story, photos of the rider at work and a short video, on the theme that “the delivery platform assigns orders according to how ‘poor’ a rider is, for example giving harder and lower-paying orders to poorer riders, since poor riders will not refuse”. Local media checked the story using the detection capabilities of large models and found that some models judged the text to be AI-generated while others considered it human-written. In examining the images and video, however, the models pointed to “AI generation traces” that are invisible to the naked eye and had remained intact. In the end, the news was judged to be false.

Yu Chong explained that the detection succeeded because a growing number of large-model developers have built detection tools that accurately capture the hidden features of AI-generated content. A number of domestic technology companies and research institutions have also developed specialized AI-content detection tools. These tools build multi-dimensional recognition models through in-depth analysis of a text's semantic patterns, sentence symmetry and logical consistency, as well as an image's lighting and shadow and the physical details of the people in it. Although detection accuracy cannot reach 100 per cent, the results have strong reference value.

“Going further, more AI will generate invisible hidden watermarks,” Yu Chong said. Hidden watermarks do not affect the visual effect of the content and resist routine modifications such as cropping, colour adjustment and transcoding. It is therefore important to promote invisible watermarking in AI generation tools and to build a first line of defence against fakery at the technical level.

Can platforms connect to a large “detection” model?

Stronger technical detection capabilities also need platforms to act as the “receivers” of those capabilities.

According to the investigation, the “AI side hustlers” who use AI as a profit-making tool keep exploring ways to strip labels, while some information and literary platforms seem slow to respond to AI fakery, so that fakers can still profit from the platforms' incentive mechanisms.

The solution to this problem, I am afraid, is the “same old story”: urge platforms to shoulder their responsibility and implement a prior review mechanism. This time, however, technology can help. By connecting to mature AI-generated content detection models, platforms can set up a routine review process of “pre-release detection, early warning on suspicious content, interception of violating content”.

Specifically, texts, images, videos and other material uploaded by users would be checked in real time. False AI content and unlabeled AI content would be intercepted directly and not allowed to go online, and accounts that repeatedly publish false content in violation of the rules would face graduated penalties. For the high-frequency trending topics taught in “AI side hustle” training in particular, targeted review needs to be strengthened, false content cleaned up promptly and harmful trends curbed.
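The review flow described above (pre-release detection, early warning, interception, plus escalating penalties for repeat offenders) can be sketched roughly as follows. This is only an illustrative sketch, not any platform's actual system: the `ai_score` field stands in for the output of some detection model, and the thresholds, field names and penalty tiers are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PUBLISH = "publish"  # goes online normally
    WARN = "warn"        # suspicious: held for early warning and manual review
    BLOCK = "block"      # violating: intercepted before it goes online


@dataclass
class Upload:
    account_id: str
    ai_score: float      # hypothetical detector output in [0, 1]
    has_ai_label: bool   # whether the upload carries an "AI-generated" label
    flagged_false: bool  # hypothetical result of a separate fact check


# Assumed thresholds, chosen only for illustration.
WARN_THRESHOLD = 0.5
BLOCK_THRESHOLD = 0.9


def review(upload: Upload) -> Decision:
    """Pre-release check: block false or clearly unlabeled AI content."""
    if upload.flagged_false:
        return Decision.BLOCK
    if not upload.has_ai_label:
        if upload.ai_score >= BLOCK_THRESHOLD:
            return Decision.BLOCK
        if upload.ai_score >= WARN_THRESHOLD:
            return Decision.WARN
    return Decision.PUBLISH


def penalty_for(violations: int) -> str:
    """Escalating penalty tiers for repeat offenders (hypothetical tiers)."""
    if violations <= 0:
        return "none"
    if violations == 1:
        return "warning"
    if violations <= 3:
        return "traffic_limit"
    return "account_suspension"
```

In this sketch, correctly labeled AI content is published unless it is flagged as false, which mirrors the article's point that the targets are false content and unlabeled AI content rather than AI-generated content as such.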

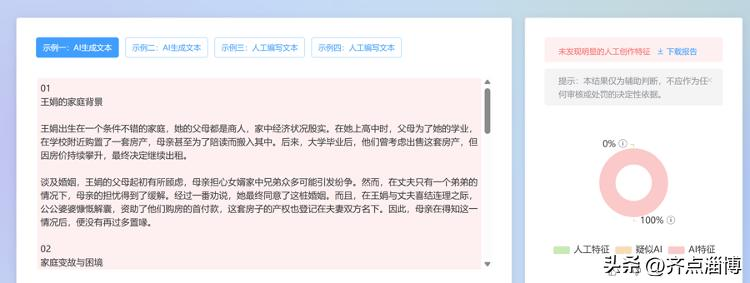

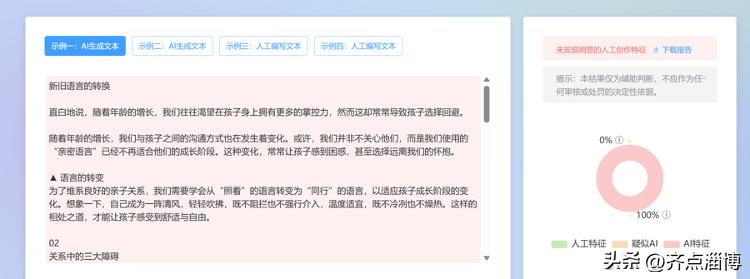

To verify how well detection works, the reporter also selected a number of derivative pieces about trending events from different self-media accounts and submitted them to several domestic large models for detection. The results show that a considerable share of the “chicken soup for the soul” pieces were identified as “no human traces” or “highly suspected AI generation”. These self-media accounts are often linked to the same entity. It is not hard to infer that they are precisely the ones seeking to profit from AI-generated articles.

The reporter tested some “chicken soup for the soul” pieces published by self-media accounts with a domestic detection model and found a high AI content. Industry insiders say that although the results are not 100 per cent accurate, they have considerable reference value, and that the accuracy of AI detection can improve further as technology advances (web screenshot)

Imagine: if platforms connected to large detection models early on, labeling AI-generated content and flagging information suspected of being misleading at the moment of publication, could the generation and spread of rumours be reduced at the source?

Of course, governing AI fakery cannot rely solely on regulation and technology; the public's ability to discern is equally important. In an age of information explosion, when faced with viral “100,000+” posts and seemingly healing chicken soup for the soul, the public must keep its rational judgment and neither believe blindly nor share casually. If content is forwarded simply because it resonates emotionally, readers may well be drawn by AI-generated false content into an ever deeper information cocoon. (Shanghai Internet Rumour Refutation)