In short, a Markov chain is a mathematical model that describes a system moving randomly between different states.

“The future depends only on the present, not on the past.” This is known as the Markov property.

For example, suppose we are playing a board game such as Monopoly or Aeroplane Chess (Ludo):

The next move depends only on which square you are currently standing on and on what the dice show.

The twists and turns you took to reach that square have no effect on the next move.

That is the essence of a Markov chain.

In more formal terms:

Given the present, the future is independent of the past.

A Markov chain usually consists of the following elements:

State: a possible situation of the system, for example a sunny day or a rainy day, or a particular page on a website.

State space: the set of all possible states.

Transition probability: the probability of moving from one state to another.

For example, if today is sunny, there is a 70% chance that the next day is sunny and a 30% chance that it rains. These 70% and 30% figures are transition probabilities.
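As a minimal sketch of the idea, the snippet below stores transition probabilities in a dictionary and samples a short sequence of days. Only the sunny row (70% / 30%) comes from the example above; the rainy row (40% / 60%) and the names `TRANSITIONS`, `next_state`, and `simulate` are assumptions made purely for illustration.

```python
import random

# Transition probabilities: TRANSITIONS[today][tomorrow].
# The "sunny" row comes from the text; the "rainy" row is an assumed value.
TRANSITIONS = {
    "sunny": {"sunny": 0.7, "rainy": 0.3},
    "rainy": {"sunny": 0.4, "rainy": 0.6},  # hypothetical numbers
}


def next_state(current, rng):
    """Sample tomorrow's weather given only today's weather."""
    options = TRANSITIONS[current]
    return rng.choices(list(options), weights=list(options.values()))[0]


def simulate(start, days, rng):
    """Return a list of days + 1 states, beginning with `start`."""
    path = [start]
    for _ in range(days):
        # Only the most recent state is consulted: the Markov property.
        path.append(next_state(path[-1], rng))
    return path


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the run is repeatable
    print(simulate("sunny", 7, rng))
```

Note that `next_state` never looks at the earlier part of `path`, which is exactly what "the future depends only on the present" means in code.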

The Markov chain was the first stochastic process in probability theory to be studied systematically, and it is now widely applied in many fields:

Search engines (e.g. Google's PageRank algorithm): the web is treated as a state space and a user clicking links at random is modeled as a Markov chain, which is used to compute the importance of a page.

Natural language processing: predicting the next word (today's AI models are more complex, but the early n-gram models are based on this idea).

Queueing theory: bank queues and network packet transmission can both be modeled with a Markov chain to estimate waiting times.

Bioinformatics: analysis and prediction of gene sequences.

Because it reduces complex long-term prediction problems to matrix calculations on a transition probability matrix, the Markov chain is one of the basic tools of modern data science and algorithms.
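As a small illustration of the search-engine example, here is a hedged sketch of the "random surfer" idea on a made-up three-page web. The link graph `LINKS` and the function `random_surfer_rank` are hypothetical, and the sketch is deliberately simplified: the real PageRank algorithm also uses a damping factor and handles pages without outgoing links, both of which are omitted here.

```python
# Hypothetical three-page web: each key links to the pages in its list.
LINKS = {
    "A": ["B", "C"],
    "B": ["C"],
    "C": ["A"],
}


def random_surfer_rank(links, rounds=100):
    """Repeatedly apply the click-a-random-link transition to a uniform start."""
    pages = list(links)
    rank = {page: 1.0 / len(pages) for page in pages}
    for _ in range(rounds):
        new_rank = {page: 0.0 for page in pages}
        for page, outgoing in links.items():
            share = rank[page] / len(outgoing)  # each link is equally likely
            for target in outgoing:
                new_rank[target] += share
        rank = new_rank
    return rank


if __name__ == "__main__":
    for page, score in random_surfer_rank(LINKS).items():
        print(page, round(score, 3))
```

Each pass of the outer loop is one step of the chain applied to the whole probability distribution. In this toy graph, pages A and C settle at a higher score than B, because more probability flows into them.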

To solve a Markov chain with matrices, the core idea is to organize the transitions into a transition probability matrix and then compute future state probabilities by matrix multiplication.

This approach makes complex recursive relationships intuitive and systematic.

Let's see how it works with a simple match between two players, A and B.

In a Markov chain, we list all the states and then build a matrix $P$ whose element $P_{ij}$ is the probability of moving from state $j$ to state $i$.

Note: some textbooks define $P_{ij}$ as the probability of moving from $i$ to $j$ instead. This article uses the column-vector convention, in which the state vector is a column and is multiplied on the left by the matrix.
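To make the two conventions concrete, here is a short side-by-side statement of each. The symbols $x_t$ (a column vector of state probabilities at step $t$), $\pi_t$ (the same probabilities written as a row vector), and $\tilde{P}$ (the row-convention matrix) are notation introduced here for illustration only.

$$
\begin{aligned}
\text{Column convention (used here):}\quad & x_{t+1} = P\,x_t, \qquad x_t = P^{t} x_0, \qquad \sum_i P_{ij} = 1 \ \text{for every column } j, \\
\text{Row convention (many textbooks):}\quad & \pi_{t+1} = \pi_t\,\tilde{P}, \qquad \pi_t = \pi_0\,\tilde{P}^{\,t}, \qquad \sum_j \tilde{P}_{ij} = 1 \ \text{for every row } i, \\
\text{Relationship:}\quad & \tilde{P} = P^{\mathsf{T}}, \qquad \pi_t = x_t^{\mathsf{T}}.
\end{aligned}
$$

The two descriptions carry the same information; one matrix is simply the transpose of the other.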

1. Define the states

The state is the score difference (A's score minus B's score). The match ends as soon as the difference reaches +3 or -3:

State 0: score difference -3 (B wins, absorbing state)

State 1: score difference -2

State 2: score difference -1

State 3: score difference 0 (starting point)

State 4: score difference +1

State 5: score difference +2

State 6: score difference +3 (A wins, absorbing state)

2. Build the transition probability matrix

In each round, A wins with probability p = 2/3 and B wins with probability q = 1/3.

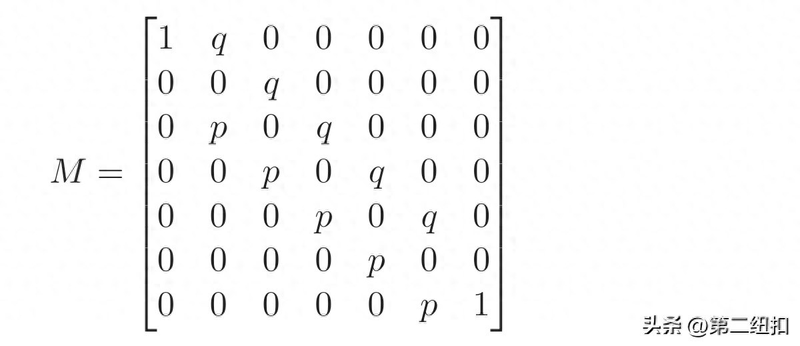

The transition matrix, called $M$ here to avoid a clash with the win probability $p$, has size 7 × 7.

$M_{ij}$ is the probability of moving from state $j$ to state $i$.

Absorbing states: once the chain enters one, it never leaves.

$M_{00} = 1$ (B wins, game ends)

$M_{66} = 1$ (A wins, game ends)

Transient (intermediate) states:

For example, in state 1 (score difference -2):

With probability $p$ the chain moves to state 2 (score difference -1), so $M_{21} = p$.

With probability $q$ the chain moves to state 0 (score difference -3), so $M_{01} = q$.

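Filling in every column this way (column $j$ holds the probabilities of leaving state $j$), the matrix written out in numbers is:

$$
M = \begin{pmatrix}
1 & \tfrac{1}{3} & 0 & 0 & 0 & 0 & 0 \\
0 & 0 & \tfrac{1}{3} & 0 & 0 & 0 & 0 \\
0 & \tfrac{2}{3} & 0 & \tfrac{1}{3} & 0 & 0 & 0 \\
0 & 0 & \tfrac{2}{3} & 0 & \tfrac{1}{3} & 0 & 0 \\
0 & 0 & 0 & \tfrac{2}{3} & 0 & \tfrac{1}{3} & 0 \\
0 & 0 & 0 & 0 & \tfrac{2}{3} & 0 & 0 \\
0 & 0 & 0 & 0 & 0 & \tfrac{2}{3} & 1
\end{pmatrix}
$$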
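The construction can be sketched in code as follows. This is a minimal illustration rather than a reference implementation: the names `build_matrix` and `step` are made up for the example, and exact fractions are used so that no rounding error creeps in. It follows the column convention stated earlier, where entry `[i][j]` is the probability of moving from state `j` to state `i`.

```python
from fractions import Fraction

P_WIN = Fraction(2, 3)   # probability that A wins a round (from the text)
Q_WIN = 1 - P_WIN        # probability that B wins a round
N_STATES = 7             # states 0..6 correspond to score differences -3..+3


def build_matrix():
    """Return M as a list of rows, with M[i][j] = Pr(move from state j to state i)."""
    m = [[Fraction(0)] * N_STATES for _ in range(N_STATES)]
    m[0][0] = Fraction(1)                        # -3 is absorbing (B has won)
    m[N_STATES - 1][N_STATES - 1] = Fraction(1)  # +3 is absorbing (A has won)
    for j in range(1, N_STATES - 1):             # transient states
        m[j + 1][j] = P_WIN                      # A wins the round: difference +1
        m[j - 1][j] = Q_WIN                      # B wins the round: difference -1
    return m


def step(m, x):
    """One round of play: return the matrix-vector product M x."""
    return [sum(m[i][j] * x[j] for j in range(len(x))) for i in range(len(m))]


if __name__ == "__main__":
    m = build_matrix()
    x = [Fraction(0)] * N_STATES
    x[3] = Fraction(1)       # start at score difference 0
    for _ in range(3):       # play three rounds
        x = step(m, x)
    print([str(v) for v in x])
```

After three rounds, `x[6]` is 8/27, since the only way for A to have won already is to take all three rounds, and `x[0]` is 1/27 for the same reason on B's side.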

Solution: the fundamental matrix

There is a standard method for solving Markov chains that contain absorbing states.

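1. Rearrange the matrix (optional, but easier to understand)

Usually the absorbing states are placed first, so that the transition matrix takes a block form. Written with rows as the current state and columns as the next state (the transpose of the convention used for $M$ above), it is:

$$
\begin{pmatrix} I & 0 \\ R & Q \end{pmatrix}
$$

$I$: identity matrix (each absorbing state only transitions to itself).

$Q$: transitions among the transient states.

$R$: transitions from transient states into absorbing states.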

2. Compute the fundamental matrix

Compute $N = (I - Q)^{-1}$.

This $N$ is called the fundamental matrix.

With rows indexing the starting state, $N_{ij}$ is the expected number of times the chain visits transient state $j$, starting from transient state $i$, before it is absorbed.
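One way to see why an inverse appears here (a standard argument, added as a supporting sketch): the probabilities of being in each transient state after $k$ steps are given by $Q^k$, so summing over all $k$ counts the expected number of visits.

$$
N = I + Q + Q^2 + Q^3 + \cdots = \sum_{k=0}^{\infty} Q^k,
\qquad
(I - Q)\,N = I \;\Longrightarrow\; N = (I - Q)^{-1}.
$$

The series converges because every transient state eventually leaks probability into an absorbing state, so $Q^k \to 0$ as $k \to \infty$.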

3. Compute the absorption probabilities

The absorption probability matrix $B$ is:

$$
B = N R
$$

With rows indexing the starting state, $B_{ij}$ is the probability that the chain, starting from transient state $i$, is eventually absorbed in absorbing state $j$.

Using matrices for this problem is a bit like using a sledgehammer to crack a nut, but it gives a deeper understanding of the method.

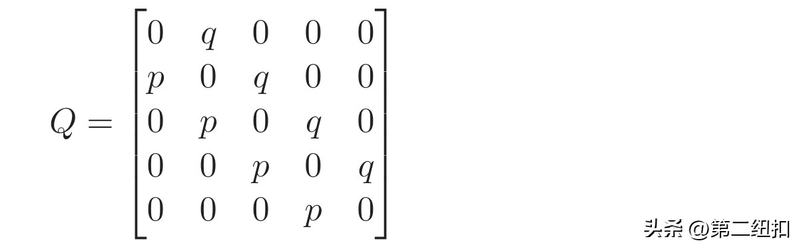

Next, we focus only on the transient states (-2, -1, 0, +1, +2) and their probabilities of falling into an absorbing state (-3 or +3).

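Let $Q$ be the transition matrix among the transient states (rows and columns correspond to -2, -1, 0, +1, +2; the row is the current state and the column is the next state):

$$
Q = \begin{pmatrix}
0 & \tfrac{2}{3} & 0 & 0 & 0 \\
\tfrac{1}{3} & 0 & \tfrac{2}{3} & 0 & 0 \\
0 & \tfrac{1}{3} & 0 & \tfrac{2}{3} & 0 \\
0 & 0 & \tfrac{1}{3} & 0 & \tfrac{2}{3} \\
0 & 0 & 0 & \tfrac{1}{3} & 0
\end{pmatrix}
$$

And let $R$ hold the probabilities of falling from a transient state into an absorbing state (same row order; the columns correspond to -3 and +3):

$$
R = \begin{pmatrix}
\tfrac{1}{3} & 0 \\
0 & 0 \\
0 & 0 \\
0 & 0 \\
0 & \tfrac{2}{3}
\end{pmatrix}
$$

First column (absorbed at -3): this can only happen from -2, when A loses one more round, with probability $q$.

Second column (absorbed at +3): this can only happen from +2, when A wins one more round, with probability $p$.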

Calculation steps

1. Compute $I - Q$

2. Invert it to obtain $N = (I - Q)^{-1}$

3. Compute $B = N R$
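The three steps above can be sketched in plain Python with exact fractions. This is one possible way to carry them out, not the only one: the helper names (`identity`, `matmul`, `inverse`, `absorbing_chain`, `game_blocks`) are invented for this example, and the inverse is computed with ordinary Gauss-Jordan elimination. Rows index the current state, matching the layout of the transient block and the absorption block described above.

```python
from fractions import Fraction


def identity(n):
    return [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]


def matmul(a, b):
    """Plain matrix product of two lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def inverse(a):
    """Invert a square matrix by Gauss-Jordan elimination on [A | I]."""
    n = len(a)
    aug = [list(row) + eye_row for row, eye_row in zip(a, identity(n))]
    for col in range(n):
        # Find a row with a non-zero pivot and swap it into place.
        pivot = next(r for r in range(col, n) if aug[r][col] != 0)
        aug[col], aug[pivot] = aug[pivot], aug[col]
        # Scale the pivot row so that the pivot becomes 1.
        scale = aug[col][col]
        aug[col] = [v / scale for v in aug[col]]
        # Clear the pivot column in every other row.
        for r in range(n):
            if r != col and aug[r][col] != 0:
                factor = aug[r][col]
                aug[r] = [v - factor * w for v, w in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def absorbing_chain(q, r):
    """Return (N, B) with N = (I - Q)^-1 and B = N R (rows = starting state)."""
    n = len(q)
    eye = identity(n)
    i_minus_q = [[eye[i][j] - q[i][j] for j in range(n)] for i in range(n)]  # step 1
    fundamental = inverse(i_minus_q)                                         # step 2
    return fundamental, matmul(fundamental, r)                               # step 3


def game_blocks(p):
    """Q and R for transient differences -2..+2 and absorbing states (-3, +3)."""
    lose = 1 - p
    size = 5
    q = [[Fraction(0)] * size for _ in range(size)]
    r = [[Fraction(0)] * 2 for _ in range(size)]
    for s in range(size):
        if s + 1 < size:
            q[s][s + 1] = p       # A wins the round, still transient
        else:
            r[s][1] = p           # from +2 straight into +3
        if s - 1 >= 0:
            q[s][s - 1] = lose    # B wins the round, still transient
        else:
            r[s][0] = lose        # from -2 straight into -3
    return q, r


if __name__ == "__main__":
    q, r = game_blocks(Fraction(2, 3))
    n, b = absorbing_chain(q, r)
    start = 2                     # row index of score difference 0
    print("P(B wins), P(A wins):", [str(v) for v in b[start]])
    print("expected number of rounds:", sum(n[start]))
```

Summing the start row of the fundamental matrix gives the expected number of rounds before the match ends, which comes out to 7 for this game.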

In the result matrix $B$, look up the row corresponding to the starting state (score difference 0):

-3: 1/9, the probability that A loses.

+3: 8/9, the probability that A wins.
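As a cross-check that does not use matrices, the same game is an instance of the classic gambler's-ruin problem, whose closed-form solution is well known. Here $r = q/p = 1/2$, the start is 3 steps above the losing boundary, and the two boundaries are 6 steps apart ($r$ is notation introduced for this check):

$$
\Pr(\text{A wins}) = \frac{1 - r^{3}}{1 - r^{6}}
= \frac{1 - \tfrac{1}{8}}{1 - \tfrac{1}{64}}
= \frac{7/8}{63/64}
= \frac{8}{9},
\qquad
\Pr(\text{B wins}) = 1 - \frac{8}{9} = \frac{1}{9}.
$$

Both values agree with the matrix result.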

Many complex problems can be modeled with states in the same way, such as estimating winning chances in a chess AI or ranking web pages, and then solved with matrix calculations. Computers are very good at matrix multiplication, which is much more efficient than working through a pile of equations by hand.