
1. A search engine is a computer program that crawls and follows the links between web pages. It organizes and processes the information it collects, provides a search service to users, and presents the relevant results it retrieves. Users enter keywords in the search box, and the search engine returns the matching results in ranked order.

2. Common search engines (listed below)

Today there are Baidu, 360 Search, Google and Sogou, as well as search inside websites and apps, such as WeChat on mobile. All of these have their own search engines.

What is search engine marketing

By definition, search engine marketing is marketing carried out by studying the search behaviour of internet users and presenting marketing information quickly and accurately on the search results page. In short, it is web marketing that uses search engines.

If a user searches for a product keyword, finds your website in the results and clicks through, you have attracted one visitor through the search engine. If you want keyword searches to bring more visitors to your site, you have to take specific actions to make the search engine work for you. That is search engine marketing.

There are two main kinds of search results: 1. natural search results, and 2. paid search results.

I. Natural search results

"Natural search results" are the results most relevant to the keyword that users find naturally, without payment, when they search. Note that SEO not only helps your website appear in the results for a keyword, it also helps improve its position in the ranking.

In fact, when people talk about search results they usually mean natural search results, and 60 per cent of visitors click on natural search results, because these are the pages most relevant to the keywords they searched for. Natural search results are therefore an important part of search engine marketing. Although this approach takes a long time and a lot of effort, its effect is lasting and it saves the business a meaningful amount of budget.

II. Paid search results

Many search sites make their profit from paid search results. Placement in these results is obtained mainly by paying: when users search for a keyword, the advertiser's own page appears in the results. This approach attracts visitors quickly and works well, but it requires substantial budget.

Whether through free search engine optimization (SEO) or paid search engine bidding (SEM), search engine marketing is an important web marketing strategy, and many businesses try to get their marketing information onto the first page of Baidu to gain more exposure and therefore more users.

How the Baidu search engine works

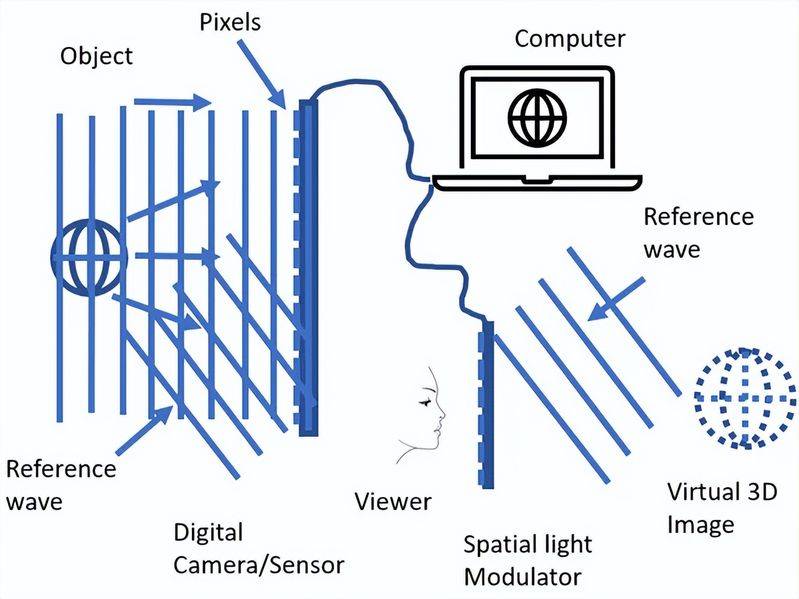

Baiduspider is an automated program of the Baidu search engine. Its job is to visit web pages on the internet and build the index database so that users can find the pages of your site in Baidu search.

With the explosive growth of information on the internet, how to acquire and use that information effectively is the first task of a search engine. The data capture system sits upstream of the entire search system and is mainly responsible for collecting, storing and updating internet information. Because it crawls across the network like a spider, it is usually called a "spider".

The spider starts from a set of important seed URLs, keeps discovering new URLs through the hyperlinks on each page and captures them, trying to capture as many valuable pages as possible. For a large spider system like Baidu's, pages may be modified or deleted and new hyperlinks may appear at any moment, so the spider has to keep the pages it captured in the past up to date and maintain both a URL library and a page library.

The sheer scale of internet resources requires the capture system to use bandwidth as efficiently as possible and to capture as many valuable resources as it can with limited hardware and bandwidth.
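The seed-and-follow process described above can be sketched in a few lines. This is only an illustration, not Baidu's implementation: the toy link graph, the `crawl` function and the `max_pages` limit are all assumptions made for the example, and a real spider would fetch pages over HTTP instead of reading a dictionary.

```python
from collections import deque

# Hypothetical in-memory "web": each URL maps to the hyperlinks found on that page.
TOY_WEB = {
    "https://example.com/": ["https://example.com/a", "https://example.com/b"],
    "https://example.com/a": ["https://example.com/b", "https://example.com/c"],
    "https://example.com/b": ["https://example.com/"],
    "https://example.com/c": [],
}


def toy_fetch(url):
    """Stand-in for a real HTTP fetch plus link extraction."""
    return TOY_WEB.get(url, [])


def crawl(seed_urls, fetch_links, max_pages=100):
    """Breadth-first crawl from the seeds; returns (url_library, page_library)."""
    url_library = set(seed_urls)   # every URL discovered so far
    page_library = {}              # captured URL -> links extracted from that page
    frontier = deque(seed_urls)    # URLs waiting to be captured

    while frontier and len(page_library) < max_pages:
        url = frontier.popleft()
        links = fetch_links(url)
        page_library[url] = links
        for link in links:
            if link not in url_library:   # only queue URLs not seen before
                url_library.add(link)
                frontier.append(link)
    return url_library, page_library


urls, pages = crawl(["https://example.com/"], toy_fetch)
print(sorted(urls))
```

The `max_pages` cap is a crude stand-in for the limited hardware and bandwidth budget: the crawl stops capturing once the budget is used up, even if the URL library still contains pages that have not been visited.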

A large amount of data on the internet is temporarily out of reach of search engines; this is known as dark web data. On the one hand, much of the data on many sites lives in web databases, and it is difficult for the spider to obtain the complete content by capturing pages. On the other hand, problems with the network environment, sites that do not follow standards, isolated islands (pages that nothing links to) and similar issues can also prevent search engines from capturing content. For now, the main approach to obtaining dark web data is still data submission through open platforms, such as the Baidu webmaster platform and the Baidu open platform.

During capture the spider often runs into so-called crawl black holes or is troubled by large numbers of low-quality pages, so the capture system also needs a well-designed anti-cheating system. Examples include analysing URL features, analysing page size and content, and analysing how the size of a site compares with the scale of capture.


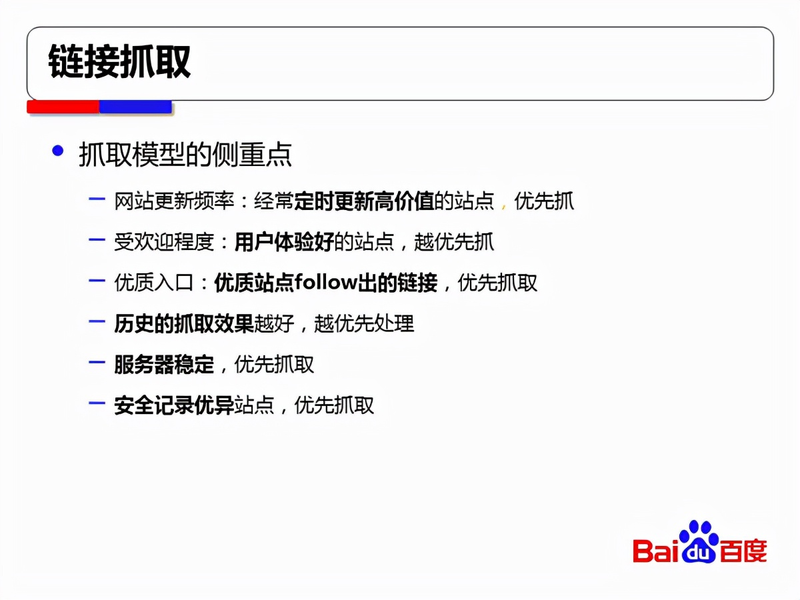

Some of the factors that affect this step are:

1. The site blocks the crawler. Don't laugh: there really are people who block Baiduspider while submitting data to Baidu.

2. Quality screening. Since Baiduspider entered version 3.0, its identification of low-quality content has reached a new level. For time-sensitive content in particular, quality assessment and screening now begin at the capture step, and a large number of pages, such as over-optimized ones, are filtered out. Most pages that are not displayed are missing because their quality is not high.

3. Capture failure. Capture can fail for many reasons. Sometimes the site works perfectly when you visit it from the office while Baiduspider runs into trouble, so the site needs to keep an eye on its stability at different times.

4. Quota restrictions. Although the capture quota is gradually being relaxed, a sudden explosion in the number of pages on a site will still affect the capture of its high-quality links. So, besides ensuring stable access, a site should also pay attention to security and guard against being hacked and having pages injected.
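The first factor is easy to check for yourself. The sketch below uses Python's standard `urllib.robotparser` module to test whether a given robots.txt would block Baiduspider. The robots.txt content and the `is_blocked` helper are hypothetical examples, and the check only covers robots.txt rules, not other ways of blocking a crawler such as server-side IP or user-agent filtering.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks Baiduspider but allows every other crawler.
ROBOTS_TXT = """\
User-agent: Baiduspider
Disallow: /

User-agent: *
Allow: /
"""


def is_blocked(robots_txt, user_agent, url):
    """Return True if the robots.txt rules forbid user_agent from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


blocked = is_blocked(ROBOTS_TXT, "Baiduspider", "https://example.com/page.html")
print("Baiduspider blocked:", blocked)
```

If this reports that the crawler is blocked, submitting links for the same pages will not help until the robots.txt rule is removed.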

Overview of search engine retrieval

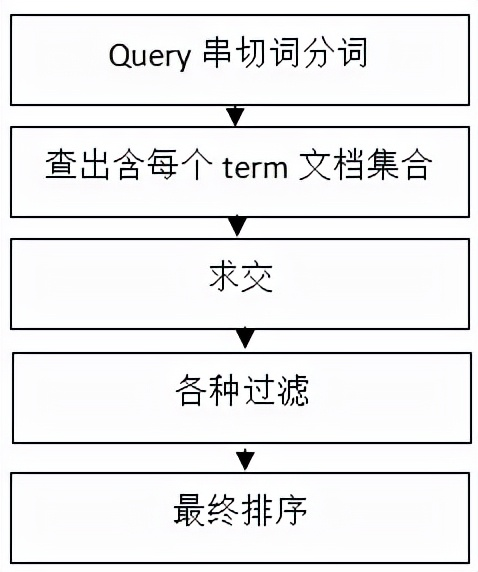

The indexing system of the search engine has been outlined earlier. In fact, building the inverted index ends with a step that writes the index into the library, and to improve efficiency this step also stores all terms and their offsets in the file header and compresses the data. That is too technical to go into here. Today's topic is a brief introduction to the retrieval system that runs once the index has been built.

The search system consists of five main components, as shown in the figure below:

1. Query word segmentation: the user's query is split into terms to prepare for the subsequent lookup.

2. Lookup: find the set of documents that contains each term, i.e. the candidate set.

3. Intersection: in the example above, document 2 and document 9 may be the ones we need. The intersection step largely determines the performance of the whole system, which includes performance optimizations such as caching.

4. Filtering of various kinds, for example filtering out dead links, duplicate data, pornography, spam results and the like.

5. Final ranking: the results that best meet the user's needs are placed first. Useful signals may include the overall evaluation of the website, page quality, content quality, resource quality, degree of match, dispersion, timeliness and so on, which will be presented in detail later.
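The five steps above can be sketched as a toy pipeline. Everything here is an assumption made for illustration: the corpus is invented (ids 2 and 9 are chosen only to echo the text), whitespace splitting stands in for real word segmentation, a fixed blocklist stands in for the filters, and the score is a simple term-frequency ratio instead of the many signals a real engine combines.

```python
from collections import defaultdict

# Hypothetical toy corpus: document id -> text.
DOCS = {
    1: "subway line map",
    2: "subway line 10 fault today",
    5: "subway fault fault fault",
    9: "fault on subway line 10",
}
BLOCKED = {5}  # stand-in for dead links, duplicates and spam results


def segment(text):
    """Step 1: split text into terms (whitespace split as a stand-in)."""
    return text.lower().split()


def build_index(docs):
    """Inverted index: term -> set of ids of the documents containing it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in segment(text):
            index[term].add(doc_id)
    return index


def search(query, docs, index, blocked):
    terms = segment(query)                            # 1. segmentation
    postings = [index.get(t, set()) for t in terms]   # 2. document set per term
    if not postings:
        return []
    candidates = set.intersection(*postings)          # 3. intersection
    candidates -= blocked                             # 4. filtering

    def score(doc_id):
        words = segment(docs[doc_id])
        return sum(words.count(t) for t in terms) / len(words)

    # 5. ranking: higher score first, ties broken by document id
    return sorted(candidates, key=lambda d: (-score(d), d))


index = build_index(DOCS)
print(search("subway fault", DOCS, index, BLOCKED))
```

Document 1 drops out at the intersection step because it does not contain every query term, and document 5 is removed by the filter even though it matches, which is the distinction between steps 3 and 4.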

Bear Paw account (Xiongzhanghao)

Baidu used to have no Bear Paw account. Now that it exists, it is a powerful tool for getting a site's pages included.

There are some differences between the traditional "link submission" tool and the current Bear Paw "new content interface" that webmasters should note:

Data submitted through the "link submission" tool speeds up the crawler's capture of that data, and there is no daily quota limit.

Data submitted through the Bear Paw "new content interface" can be captured and displayed within 24 hours after passing quality verification, but there is a fixed daily submission quota. (For small and medium-sized sites the quota is fully adequate.)

Therefore, for sites that produce a large amount of content every day, we suggest submitting the data that exceeds the Bear Paw quota through the "historical content interface" or through the "link submission" tool in the webmaster tools.

That covers the principles of the Baidu search engine. I hope it helps you.
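The suggestion above amounts to splitting each day's URLs into two batches. A minimal sketch follows, assuming a hypothetical quota of 3 and made-up URLs. It makes no API calls: the actual submission endpoints and your real quota come from the webmaster platform and are not shown here.

```python
def split_by_quota(urls, daily_quota):
    """Return (within_quota, overflow), preserving the original order."""
    if daily_quota < 0:
        raise ValueError("daily_quota must not be negative")
    return urls[:daily_quota], urls[daily_quota:]


# Hypothetical list of pages published today, most important first.
new_urls = [f"https://example.com/post/{i}" for i in range(1, 6)]

for_new_content, for_other_channels = split_by_quota(new_urls, daily_quota=3)
print(len(for_new_content), "URLs for the new content interface")
print(len(for_other_channels), "URLs for the historical content interface or link submission")
```

Because the split keeps the original order, putting the most important pages first means they are the ones that use the limited quota.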